Intel launched its eighth-generation processors in August this year, but these weren’t the 10nm chips most techies were looking for. The chipmaker had originally forecast that it would have 10nm chips ready by the end of 2016, roughly two years after the launch of its 14nm chips, in keeping with its usual tick-tock cycle. But we all know hardware is hard, especially when it needs to be engineered and fabricated at the nanometre scale!

For its part, Intel says that it’s looking at things from a user’s perspective instead of a strictly technical one, and that a 40 per cent upgrade in performance is significant enough to justify a generational rebranding. But is it enough to beat other high-performance contenders like AMD’s Ryzen? More importantly, which one would be the optimal pick for your next high-performance computing project?

Let’s take a look.

Do you need peak graphics performance for virtual reality?

If yes, you should be looking at central processing units (CPUs) and platforms that support two-way scalable link interface (SLI) and up to 64GB of DRAM. SLI is NVIDIA’s multi-GPU (graphics processing unit) technology, which lets users increase a computer’s graphics capability. How does it work? Two or more video cards are linked together and render in parallel, producing a single, much more powerful output.
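One of the ways SLI splits work is alternate frame rendering (AFR), where the cards take turns rendering whole frames. The sketch below is a simplified model of that idea, not NVIDIA’s driver logic. The function names and the `scaling_efficiency` factor are hypothetical, chosen only to show why real-world gains fall short of a clean doubling.

```python
from typing import List


def assign_frames_afr(num_frames: int, num_gpus: int) -> List[int]:
    """Return the index of the GPU that renders each frame under alternate frame rendering.

    Frame i goes to GPU i % num_gpus, so consecutive frames are rendered
    in parallel on different cards.
    """
    if num_gpus < 1:
        raise ValueError("need at least one GPU")
    return [frame % num_gpus for frame in range(num_frames)]


def estimated_fps(single_gpu_fps: float, num_gpus: int, scaling_efficiency: float = 1.0) -> float:
    """Rough frame-rate estimate for a multi-GPU setup (hypothetical model).

    scaling_efficiency is an assumed factor in (0, 1]: 1.0 means each extra
    card adds a full GPU's worth of frames. Real-world SLI scaling is lower
    and varies from game to game.
    """
    return single_gpu_fps * (1 + (num_gpus - 1) * scaling_efficiency)


print(assign_frames_afr(6, 2))  # [0, 1, 0, 1, 0, 1]
print(estimated_fps(60.0, 2, scaling_efficiency=0.8))
```

With two cards, even frames go to the first GPU and odd frames to the second. The efficiency factor stands in for synchronisation and driver overhead.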

Currently, the Ryzen 7 1800X tops AMD’s Ryzen 7 range. It has eight processor cores and a 4GHz maximum Turbo Core clock speed, and it uses the Socket AM4 package. AM4 is AMD’s microprocessor socket with 1331 pin slots, and it supports DDR4 memory.

This chip has a thermal design power (TDP) of 95W, and an AM4 motherboard platform will handle a dual-GPU setup comfortably as long as you keep the processor below its maximum temperature of 95°C. Good cooling matters here, and there are very good options available from AMD (its Wraith coolers), Thermaltake and Swiftech. AGESA (AMD Generic Encapsulated Software Architecture) firmware updates, delivered through motherboard BIOS updates, should also continue to improve performance as newer versions are released.

An alternative is Intel’s Kaby Lake-X based Core i7-7740X, a high-end chip that can deliver some serious computing power when used with an X299 motherboard. Launched earlier this year, it has four cores, eight threads and a maximum Turbo clock speed of 4.5GHz. However, its TDP is 112W and the maximum temperature allowed on the processor die is 100°C, so your cooling system had better be a really good one.

Overclocking helps to improve value for money

Those who are familiar with overclocking can save a good amount of money while having some fun. In overclocking, you increase the operating frequency of your CPU and memory to get a faster computer. It also involves tweaking the core voltage and the cooling system so that your faster machine remains reliable. A bad overclock can cause your computer to overheat and shut down, among other things.
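Why does cooling matter so much when overclocking? A standard first-order model of CMOS dynamic power is P ≈ C·V²·f, so power grows linearly with clock speed but with the square of voltage. The sketch below applies that textbook approximation. The clock and voltage figures in the example are hypothetical, and leakage power is ignored, so real chips usually fare worse than this estimate.

```python
def relative_dynamic_power(base_ghz: float, base_volts: float,
                           oc_ghz: float, oc_volts: float) -> float:
    """Ratio of overclocked to stock dynamic power, using P ~ C * V**2 * f.

    Switched capacitance C and switching activity are assumed unchanged,
    so they cancel out. Static (leakage) power is ignored.
    """
    return (oc_ghz / base_ghz) * (oc_volts / base_volts) ** 2


# Hypothetical example: 3.0GHz at 1.20V pushed to 3.9GHz at 1.35V.
ratio = relative_dynamic_power(3.0, 1.20, 3.9, 1.35)
print(f"dynamic power rises by about {ratio:.2f}x")
```

A 30 per cent clock increase combined with a 12.5 per cent voltage bump works out to roughly 65 per cent more dynamic power, all of which the cooler has to remove as heat.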

AMD’s Ryzen Master Utility makes overclocking Ryzen chips easy. For example, the Ryzen 7 1700 can be overclocked to compete with the much more expensive 1800X! On the Intel side, an alternative is the Core i7-7700K Kaby Lake-S processor. It works with cheaper Z270 motherboards, has a lower TDP of 91W, and can be overclocked to almost 5GHz if you have the skills and patience.

If you are looking for even more value for money, AMD’s Ryzen 5 1600 is a better option than the similarly priced Intel Core i5-7500, since the AMD chip runs 12 threads against the Intel chip’s four. This makes little difference for older software that relies on single-thread performance, but it will significantly benefit multithreaded applications.
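How much the extra threads help depends on how much of a workload can run in parallel. Amdahl’s law captures this: with a parallel fraction p and n workers, speedup = 1 / ((1 − p) + p/n). The sketch below compares 4 and 12 threads. The p values are hypothetical, and the model treats every thread as a full core, which overstates the benefit of simultaneous multithreading (the Ryzen 5 1600’s 12 threads run on six physical cores).

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Amdahl's law: speedup = 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    return 1.0 / ((1.0 - parallel_fraction) + parallel_fraction / workers)


# Hypothetical workloads: fully serial, half parallel, mostly parallel.
for p in (0.0, 0.5, 0.9):
    four = amdahl_speedup(p, 4)
    twelve = amdahl_speedup(p, 12)
    print(f"p={p}: 4 threads -> {four:.2f}x, 12 threads -> {twelve:.2f}x")
```

A purely serial program (p = 0) gains nothing from either chip, while a mostly parallel one (p = 0.9) sees about 3.1x on four threads versus about 5.7x on twelve in this idealised model.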

AMD Ryzen Threadripper

Do you need heavyweight processing?

The new Ryzen Threadripper 1950X is a 16-core, 32-thread monster with 64 PCIe lanes, and its cores run at up to 4GHz on Turbo. Its maximum temperature is 68°C, and its default TDP is a whopping 180W!

According to AMD, Ryzen chips also integrate some machine learning: the neural-network-based branch prediction in its SenseMI technology learns from a program’s past behaviour, which helps the CPU perform better on recurring tasks. On the other hand, Intel’s 10-core Core i9-7900X runs at up to 4.3GHz with a TDP of 140W. It comes with 44 PCIe 3.0 lanes and supports AVX-512.
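AMD describes this Ryzen feature as neural-network-based branch prediction. AMD has not published the exact design, so the sketch below follows the classic single-perceptron predictor from Jiménez and Lin’s academic work instead. It keeps one weight per bit of recent branch history, predicts "taken" when the weighted sum is non-negative, and nudges the weights after each outcome. The class name, history length and training threshold are illustrative assumptions.

```python
from typing import List


class PerceptronPredictor:
    """Toy perceptron branch predictor (textbook style, not AMD's actual design).

    History bits are stored as +1 (taken) or -1 (not taken); weights[0] is a bias.
    """

    def __init__(self, history_length: int = 8) -> None:
        self.history: List[int] = [-1] * history_length
        self.weights: List[int] = [0] * (history_length + 1)
        # Training threshold from the commonly cited heuristic 1.93 * h + 14.
        self.threshold = int(1.93 * history_length + 14)

    def _output(self) -> int:
        # Bias plus the dot product of weights and the history bits.
        return self.weights[0] + sum(w * x for w, x in zip(self.weights[1:], self.history))

    def predict(self) -> bool:
        return self._output() >= 0

    def update(self, taken: bool) -> bool:
        """Predict, learn from the real outcome, and return True if the prediction was right."""
        y = self._output()
        predicted = y >= 0
        t = 1 if taken else -1
        # Train on a misprediction, or when the output is not yet confident enough.
        if predicted != taken or abs(y) <= self.threshold:
            self.weights[0] += t
            for i, x in enumerate(self.history):
                self.weights[i + 1] += t * x
        # Shift the newest outcome into the global history register.
        self.history = [t] + self.history[:-1]
        return predicted == taken


predictor = PerceptronPredictor(history_length=8)
# A branch that alternates taken / not taken, e.g. work done every other loop iteration.
outcomes = [i % 2 == 0 for i in range(200)]
results = [predictor.update(taken) for taken in outcomes]
print(f"correct predictions in the last 100 branches: {sum(results[-100:])}")
```

After a short warm-up, the predictor learns the repeating pattern. This is the kind of recurring behaviour that learned prediction is meant to exploit.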

Server-grade capabilities available here

A scalable foundation for modern data centres requires an efficient interconnect between on-chip components, so that multi-core processors can grow without losing efficiency. Intel’s new mesh network provides this scalability within one large monolithic die, while AMD’s multi-die strategy uses its new Infinity Fabric to link several smaller dies.
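One way to see why Intel moved to a mesh is to compare average hop counts. Intel’s earlier many-core Xeons linked cores with ring buses. On a ring, the average distance between stops grows linearly with the number of stops, while on a 2D mesh it grows roughly with the square root. The sketch below uses idealised topologies and a hypothetical 36-stop layout. It does not model Intel’s real floorplan, latencies or bandwidth.

```python
from itertools import product


def avg_hops_ring(n: int) -> float:
    """Average shortest-path hops between distinct stops on a bidirectional ring of n stops."""
    total = 0
    for a in range(n):
        for b in range(n):
            if a != b:
                d = abs(a - b)
                total += min(d, n - d)  # traffic can go either way round the ring
    return total / (n * (n - 1))


def avg_hops_mesh(rows: int, cols: int) -> float:
    """Average hops (Manhattan distance) between distinct stops on a rows x cols mesh."""
    nodes = list(product(range(rows), range(cols)))
    total = 0
    for r1, c1 in nodes:
        for r2, c2 in nodes:
            total += abs(r1 - r2) + abs(c1 - c2)  # self-pairs add zero
    n = len(nodes)
    return total / (n * (n - 1))


print(f"ring, 36 stops: {avg_hops_ring(36):.2f} hops")  # 9.26
print(f"mesh, 6x6:      {avg_hops_mesh(6, 6):.2f} hops")  # 4.00
```

With the same number of stops, the mesh needs well under half the hops on average, and the gap widens as core counts rise.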

Both can be seen as evolutions of older interconnects such as the Front Side Bus (FSB) and HyperTransport. The Infinity Fabric is designed to let a system-on-chip (SoC) or GPU make full use of its DRAM, which should bring real-world performance closer to theoretical limits.

AMD’s EPYC and Ryzen processors are built from blocks called CPU Complexes (CCX), each consisting of four ‘Zen’ cores that share an 8MB L3 cache. Interestingly, while each core has its own 2MB slice of that L3 cache, it can also quickly access the other 6MB.

There are two CCX blocks in each die, and a complete EPYC chip is a multi-chip module (MCM) made of four such dies. The EPYC 7601 has 32 cores running at a maximum frequency of 3.2GHz, 64MB of L3 cache and a TDP of 180W.
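The EPYC figures follow directly from the building blocks described above: four cores per CCX, 2MB of L3 per core, two CCXs per die and four dies per package. The small sketch below derives the totals. The class and its field names are hypothetical, introduced only to make the multiplication explicit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ZenTopology:
    """Core and L3 totals built up from Zen's CCX building blocks."""
    dies_per_package: int
    cores_per_ccx: int = 4
    l3_mb_per_core: int = 2
    ccx_per_die: int = 2

    @property
    def cores(self) -> int:
        return self.cores_per_ccx * self.ccx_per_die * self.dies_per_package

    @property
    def l3_per_ccx_mb(self) -> int:
        return self.cores_per_ccx * self.l3_mb_per_core

    @property
    def total_l3_mb(self) -> int:
        return self.l3_per_ccx_mb * self.ccx_per_die * self.dies_per_package


epyc = ZenTopology(dies_per_package=4)
print(epyc.cores, epyc.l3_per_ccx_mb, epyc.total_l3_mb)  # 32 8 64
```

Setting `dies_per_package` to 1 gives the eight cores and 16MB of L3 of a single-die Ryzen 7, and 2 gives the 16 cores and 32MB of L3 of a Threadripper 1950X.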

In the Skylake-SP Xeon Scalable Processor family, Intel increased the L2 cache by 768kB per core (from 256kB to 1MB), made the shared L3 cache non-inclusive and, on higher-end models, added a second 512-bit AVX-512 unit. AVX-512 doubles the number of floating-point operations per clock cycle compared with the previous 256-bit AVX2 generation.

AnandTech notes that “even in the best-case scenario, some of the performance advantage will be negated by the significantly lower clock speeds (base and turbo) that Intel’s AVX-512 units run at due to the sheer power demands of pushing so many FLOPS.”
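The "twice the operations per cycle" claim comes from simple arithmetic. A 512-bit register holds eight 64-bit doubles versus four for 256-bit AVX2, and each fused multiply-add (FMA) counts as two operations. The sketch below assumes two FMA units per core in both cases. The 2.0GHz AVX-512 clock in the last line is a hypothetical figure, used to illustrate AnandTech’s point that lower AVX-512 clocks eat into the theoretical gain.

```python
def peak_dp_gflops(cores: int, ghz: float, vector_bits: int, fma_units: int) -> float:
    """Theoretical peak double-precision GFLOPS.

    Each 64-bit double occupies one vector lane, and each FMA counts as
    two floating-point operations (a multiply and an add).
    """
    lanes = vector_bits // 64
    flops_per_cycle = lanes * 2 * fma_units
    return cores * ghz * flops_per_cycle


# Per core, per cycle: AVX2 (256-bit) vs AVX-512, two FMA units each.
print(peak_dp_gflops(1, 1.0, 256, 2))  # 16.0
print(peak_dp_gflops(1, 1.0, 512, 2))  # 32.0

# Hypothetical: a 28-core chip sustaining 2.0GHz under AVX-512 load.
print(peak_dp_gflops(28, 2.0, 512, 2))
```

These are theoretical peaks. Real code rarely keeps both FMA units fully busy, and the clock under AVX-512 load is the variable that matters most.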

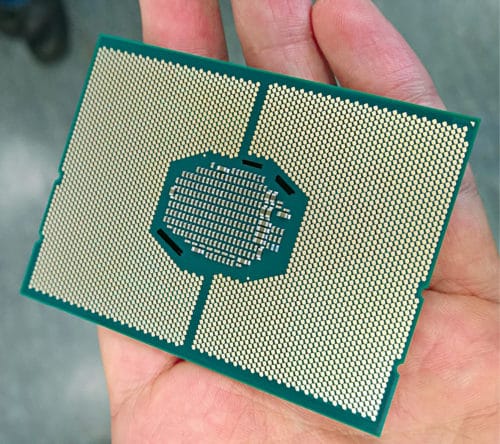

The Intel Xeon Platinum 8180 has 28 cores and 56 threads running at up to 3.80GHz, 38.5MB of L3 cache and a TDP of 205W.

Intel Xeon Platinum

Memory on workstations

While the consumer CPUs mentioned earlier can accept up to 128GB of DRAM, AMD’s EPYC platform can handle up to 2TB per socket when using 128GB LRDIMMs. On the other hand, Xeon W-2123 processors support up to 512GB of DDR4 memory, while Xeon Scalable (Xeon-SP) processors support 768GB of DRAM per socket as standard, extendable to 1.5TB per socket on higher-capacity models offered through OEMs.
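These capacity figures come from multiplying memory channels, DIMMs per channel and DIMM size. The sketch below assumes eight channels with two DIMMs per channel for EPYC and six channels with two DIMMs per channel for Xeon-SP. Note that Intel also limits capacity by model, so standard Xeon-SP parts stay at 768GB even where larger DIMMs would physically fit.

```python
def max_memory_gb(channels: int, dimms_per_channel: int, dimm_gb: int) -> int:
    """Per-socket capacity: channels x DIMMs per channel x DIMM size."""
    return channels * dimms_per_channel * dimm_gb


print(max_memory_gb(8, 2, 128))  # EPYC with 128GB LRDIMMs: 2048GB, i.e. 2TB
print(max_memory_gb(6, 2, 64))   # Xeon-SP, standard models: 768GB
print(max_memory_gb(6, 2, 128))  # Xeon-SP, higher-capacity models: 1536GB, i.e. 1.5TB
```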

When you need massive parallelism

For researchers working on machine learning or deep learning, there are specialist chips available such as the Pascal-architecture-based Tesla P100 and the Vega-architecture-based Radeon Instinct MI25. In single-precision performance, the Tesla P100 delivers 9.3 teraflops in PCIe-based servers and 10.6 teraflops in NVLink-optimised servers, while the Instinct MI25 delivers 12.3 teraflops. The PCIe version of the P100 also delivers 4.7 teraflops of double-precision performance, while the MI25 manages 768 gigaflops. (These figures have been taken from the respective vendors’ websites.)
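The vendor figures quoted above reveal very different design priorities once you compare double-precision (FP64) with single-precision (FP32) throughput. The sketch below only divides those quoted numbers. The P100 entry uses the PCIe figures, and the dictionary layout and function name are illustrative.

```python
def fp64_to_fp32_ratio(fp32_tflops: float, fp64_tflops: float) -> float:
    """Fraction of single-precision throughput available in double precision."""
    return fp64_tflops / fp32_tflops


# Vendor-quoted peak figures, in teraflops.
specs = {
    "Tesla P100 (PCIe)": {"fp32": 9.3, "fp64": 4.7},
    "Radeon Instinct MI25": {"fp32": 12.3, "fp64": 0.768},
}

for name, s in specs.items():
    ratio = fp64_to_fp32_ratio(s["fp32"], s["fp64"])
    print(f"{name}: FP64 is {ratio:.3f} of FP32 (about 1/{1 / ratio:.0f})")
```

The P100 keeps about half its FP32 rate in FP64, while the MI25 drops to about one-sixteenth. The MI25 leads on single-precision work, but the P100 is far stronger for double-precision scientific computing.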

What’s next?

There are three interesting things to watch out for. The most obvious one is how Ice Lake, the successor to the 8th-generation Intel Core processor family, performs when it becomes available in devices, probably in 2019. These processors will use Intel’s 10nm+ process technology. For comparison, Kaby Lake is Intel’s third Core product built on a 14nm lithography process, specifically the second-generation 14 PLUS (or 14+) version of that process.

Next is how AMD’s Zen family evolves, given that Jim Keller, who led the Zen architecture design from 2012, left AMD two years ago and later joined Tesla. Finally, it remains to be seen how ARM-based solutions like Qualcomm’s Falkor-core-based Centriq 2400 rise to the challenge of x86 processors in the server market.