A massive revolution is near as smart devices proliferate across the world, driven by technology trends like ubiquitous connectivity, cloud computing, artificial intelligence (AI) and low-cost sensors. Existing products are getting smarter, and a whole new range of devices is emerging that can make lives more convenient, safer and more entertaining.

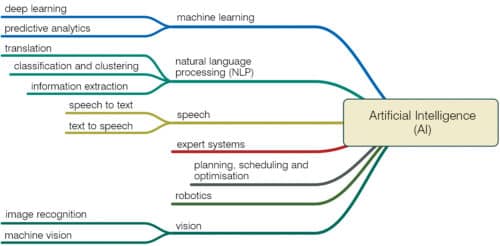

Artificial intelligence (AI) is becoming widespread in all computing applications. Devices and machines are getting smarter as machine learning (ML) algorithms process and analyse large quantities of data to learn and make decisions autonomously with human-like intelligence. Companies like Arm are bringing AI to trillions of Edge devices by adding ML capabilities to processor technology and software, making them smarter, more energy-efficient and more affordable.

AI platforms supporting ML features like predictive text, voice assistants, computational photography and speech recognition are revolutionising how people interact with technology and the way technology interacts with the world. AI systems are being utilised in applications that require the highest levels of reliability and fail-safe operation, including healthcare, autonomous vehicles and consumer robots. AI enables humans to do tasks that were once hard to imagine or too risky to attempt.

AI and ML techniques are making design methods and approaches easier and more advanced for designers. Neural networks, deep learning, augmented reality (AR), virtual reality (VR) and mixed reality (MR) are some of the outstanding advancements that AI has brought to the design and development industries.

Why move towards AI-powered devices

AI at the Edge is enabling organisations to use massive amounts of data collected by sensors and devices for smart manufacturing, healthcare, aerospace and defence, transportation, telecom and smart cities to provide engaging customer experiences. Sensors collect data such as sound, vibration, movement and temperature, which is analysed to flag relevant occurrences in real time. The rise of autonomy in Edge environments, like smart home assistants and autonomous robots, requires the most advanced computing platforms. Other areas that can use AI’s potential in an innovative way are:

- Neural networks running on Edge devices such as sensors can identify various behaviours, making these devices smart and self-reliant.

- AI can be used in aviation applications to greatly decrease runway inspection time, increase obstacle detection and reduce chances of flight delays.

- AI-based automated optical inspection systems can deliver higher levels of inspection and improve inspection accuracy in manufacturing applications.

- AI at the Edge can be used for traffic monitoring and analytics at road intersections, which can help avoid road accidents.

- In retail and logistics applications, autonomous mobile robots powered by AI can pick and prepare orders, replenish store shelves and deliver packages to customers.
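The sensor-side analysis described before this list (collect readings, analyse them locally, notify only about relevant occurrences) can be sketched in a few lines. This is a minimal illustration, not a production method: the class name `EdgeAnomalyDetector`, the rolling-window approach, the window size and the threshold of three standard deviations are all assumptions chosen for the example.

```python
from collections import deque
from statistics import mean, pstdev


class EdgeAnomalyDetector:
    """Hypothetical detector: flags readings far from the recent rolling window."""

    def __init__(self, window_size=20, threshold=3.0):
        self.window = deque(maxlen=window_size)  # recent "normal" readings
        self.threshold = threshold               # allowed deviation, in std devs

    def update(self, value):
        """Return True if `value` is anomalous relative to the recent window."""
        is_anomaly = False
        if len(self.window) >= 5:  # wait for a minimal history first
            mu = mean(self.window)
            sigma = pstdev(self.window)
            if sigma > 0 and abs(value - mu) > self.threshold * sigma:
                is_anomaly = True
        # Keep anomalies out of the baseline so one spike does not mask the next
        if not is_anomaly:
            self.window.append(value)
        return is_anomaly


def events_to_report(readings, detector):
    """Return only the (index, value) pairs worth sending upstream."""
    return [(i, v) for i, v in enumerate(readings) if detector.update(v)]


# Made-up temperature readings with a single spike
temperatures = [21.0, 21.2, 20.9, 21.1, 21.0, 21.3, 20.8, 35.5, 21.1, 21.0]
alerts = events_to_report(temperatures, EdgeAnomalyDetector(window_size=8))
print(alerts)  # [(7, 35.5)]
```

Only the spike is reported; the nine ordinary readings never leave the device, which is the point of analysing data where it is collected.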

Role of computing processors

AI redefines device capabilities through a growing design and development ecosystem that has extended its reach from smartphone central processing unit (CPU) design and app development to ML. ML engines process metadata from operating systems and applications to detect cybersecurity threats, and optimise the operation of devices and the networks in which they operate.

AI-powered devices built with CPUs and microcontrollers (MCUs) handle many of the AI and ML workloads at the Edge. Their architecture supports diverse AI applications and the specific requirements they need to run. Extremely power-efficient MCUs are required for AI algorithms in highly constrained, battery-powered Edge devices, such as wearables and sensors. High-performance, energy-efficient media processors are required for mobile and consumer devices like tablets, smartphones, cameras and smart TVs.

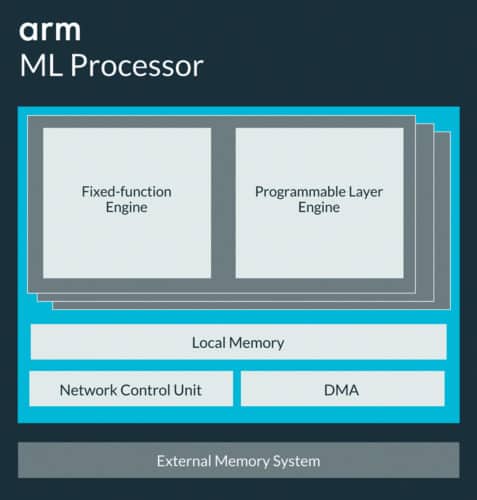

Internet of Things (IoT) platforms, combined with a new class of advanced and ultra-efficient ML processors, are transforming the IoT into a global network of securely managed devices.

An ML processor’s optimised design enables new features, improves user experience and delivers innovative applications for a wide range of market segments. It provides a massive uplift in efficiency compared to CPUs, graphics processing units (GPUs) and digital signal processors (DSPs) through efficient convolution, sparsity and compression.
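To give a feel for why sparsity and compression save work, here is a toy sketch in plain Python. It does not describe how any particular ML processor is built; the function names and the (index, value) storage format are assumptions for illustration. The idea is simply that weights equal to zero need neither storage nor a multiply-accumulate (MAC) operation.

```python
def dense_dot(weights, activations):
    """Multiply every weight, zeros included. Returns (result, mac_count)."""
    total = 0
    macs = 0
    for w, a in zip(weights, activations):
        total += w * a
        macs += 1
    return total, macs


def compress(weights):
    """Store only the non-zero weights as (index, value) pairs."""
    return [(i, w) for i, w in enumerate(weights) if w != 0]


def sparse_dot(compressed, activations):
    """Skip zero weights entirely. Returns (result, mac_count)."""
    total = 0
    macs = 0
    for i, w in compressed:
        total += w * activations[i]
        macs += 1
    return total, macs


# Made-up example: 5 of the 8 weights are zero
weights = [0, 3, 0, 0, -2, 0, 0, 1]
activations = [5, 1, 7, 2, 4, 9, 6, 8]

dense_result, dense_macs = dense_dot(weights, activations)
sparse_result, sparse_macs = sparse_dot(compress(weights), activations)

print(dense_result, dense_macs)    # 3 8
print(sparse_result, sparse_macs)  # 3 3
```

Both versions produce the same result, but the sparse one stores three weights instead of eight and performs three MACs instead of eight. Real hardware applies the same principle at far larger scale and with dedicated circuitry.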

An onboard processor and AI engine can increase computing speed. By integrating an advanced image signal processor into an AI engine, the platform’s processing time can be improved, as it does not need to communicate as much with a distant server. Enabling devices to process data locally reduces the burden on networks, which is important as the number of connected devices continues to grow.

“Recent Edge computing innovations equip algorithm-powered devices with complicated computation and storage capabilities to perform mission-critical functions that are closer to the point of impact. An integrated model of cloud and Edge computing working hand in hand allows us to optimise compute, store and network resources, and deliver the best AI performance,” says Dr Yangdong Deng, Tsinghua University.

Impact of AI on the design industry

Learning and keeping up with advanced design tools and methods has now become crucial for designers. Without knowing these advanced tools and software, it is impossible for them to succeed.

Technologically advanced tools and applications have automated several small tasks like setting up the grid, rules and scales. With fewer tasks at hand, designers can now focus more on the design itself and on choosing the best-fit design elements than on arranging those elements. This helps them carry out several tasks within the set time frame, and with greater accuracy.

Designers of the future will be more creatively, technologically and intellectually advanced. All they will need to do is counter design issues and problems. Almost half of all design tasks will be automated through AI, making systems more robust and efficient.

AI can detect and analyse patterns and trends on its own and optimise the design system accordingly. Design apps and tools are much more intelligent than ever before. AI-powered tools can also determine the best filter and visual effect to apply to images.

Jacky Wan, vice president of engineering for Arrow’s components business in Asia-Pacific region, says, “We are collaborating with Thundersoft to assist developers and engineers to integrate AI technology into Edge/gateway devices. This will empower developers and manufacturers to train and verify algorithms, and create AI applications quickly. It can also assist them in building an optimal intelligent system that can handle speech recognition, complex event/data processing and computer vision features on Edge/gateway devices.”

Latest design developments in the industry

AI is being adopted globally by all industries to drive efficiency, improve productivity and reduce costs. AI-driven systems and applications are making railway operations safer, smarter and more reliable, significantly enhancing passenger travel experience and freight logistics services. These AI-driven applications only function with proper data input that is collected by a large number of IoT devices and cameras installed in stations, on trains and along tracks. Target applications include, but are not limited to, passenger information systems, railroad intrusion detection, train station surveillance, onboard video security and railroad hazard detection.

Crystal Tseng, senior product manager, ADLINK, says, “We believe that AI can transform traditional rail operations by driving improvements including fast and convenient ticket-free check-in, real-time tracking of health diagnostics, personalised infotainment and onboard services, accurate arrival time predictions and rapid response in an emergency.” ADLINK’s PIS-5500 intelligent platform is installed on special rail inspection trains to process captured images of key wayside equipment in real time. The application can not only detect suspicious behaviour and trigger alerts but also conduct post-event analysis.

Smart cameras will play a big role in smart cities and connected industrial facilities, so increasingly large streams of data will need to be sent over networks. This could lead to slower transmissions, which would limit the functionality of connected devices.

Bringing processing and analytics onto cameras allows them to process data in real time without having to wait for it to be sent between devices and the server.
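A rough back-of-the-envelope calculation shows how much network load on-camera analytics could remove. Every number below is an assumption picked for illustration (a 4Mbit/s encoded video stream, two detection events per second, 200 bytes of metadata per event), not a measurement from any product.

```python
SECONDS_PER_DAY = 86_400


def stream_bytes_per_day(bitrate_mbps):
    """Bytes sent per day when the camera streams video continuously."""
    return bitrate_mbps * 1_000_000 / 8 * SECONDS_PER_DAY


def metadata_bytes_per_day(events_per_second, bytes_per_event):
    """Bytes sent per day when the camera sends only detection metadata."""
    return events_per_second * bytes_per_event * SECONDS_PER_DAY


# Assumed figures for one camera
video = stream_bytes_per_day(bitrate_mbps=4)
metadata = metadata_bytes_per_day(events_per_second=2, bytes_per_event=200)

print(f"Streaming video: {video / 1e9:.2f} GB per day")
print(f"Metadata only:   {metadata / 1e6:.2f} MB per day")
print(f"Reduction:       {video / metadata:.0f}x")
```

Under these assumptions, one camera sends about 43GB per day as video but under 35MB per day as metadata. The exact ratio depends entirely on the chosen figures, but the gap widens with every camera added to the network.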

Qualcomm has released its next-generation AI-powered camera design platform using its latest purpose-built system-on-chip (SoC) to better enable on-device ML and processing capabilities. The platform is designed for Edge computing use cases that need support for video processing and analytics. The SoC can be applied to an array of devices including smart security cameras, smart displays, sports cameras, robotics, inventory management tools, wearable and body cameras, and dash cams.

Smart cameras that contain MediaTek’s Helio P90 SoC support faster, more complex and more dynamic AI experiences such as human pose detection, which can track and analyse body movements. They also support Google Lens, real-time beautification, deep-learning facial detection, augmented reality and mixed reality acceleration, object and scene identification, and other real-time enhancements for photos and videos.

ST’s embedded AI technologies make neural networks run on STM32 MCUs, reducing network bandwidth by performing processing on the Edge side.

A massive revolution is coming as smart devices proliferate across the world, driven by technology trends like ubiquitous connectivity, cloud computing, AI and low-cost sensors. Existing products, ranging from cameras to audio speakers, are getting smarter, and a whole new range of devices is emerging that can make lives more convenient, safer and more entertaining. As AI-powered devices continue to improve, we will be able to automate most tasks in every field.