This article demonstrates real-time training, detection and recognition of a human face with OpenCV using the Eigenface algorithm. Let us construct this OpenCV Face Recognition System below.

There are various biometric security methodologies including iris detection, voice, gesture and face recognition, and others. Among these, face recognition plays a vital role and is one of the emerging technologies for security applications. To begin with, we need to understand the logic of training, detection and recognition of human faces.

Face detection

Fortunately, a human face has some easily recognisable features that cameras can lock on to: a pair of eyes, a nose and a mouth. By detecting a face in a scene, the camera can concentrate its autofocus on the user’s face to ensure it is the primary subject in focus within the image.

Once a face has been detected, the system tracks that face as best it can. If the user turns away, detection lock is lost. However, some camera manufacturers have enhanced the detection capability of their devices so that a lock can be maintained even when the subject has turned to the extent that only one eye remains visible.

If several faces are detected, not all of them can be in focus. Some face detection modes can lock onto several faces, in which case the primary subject of focus will usually be the closest or the most prominent face. This can be overridden by selecting a face manually.
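The choice of a primary face can be sketched in a few lines of Python. This is only an illustration, not how any particular camera works: it assumes "most prominent" can be approximated by the largest bounding box, and the function name pick_primary_face is hypothetical.

```python
from typing import List, Optional, Tuple

# A face box in the (x, y, width, height) form that OpenCV's cascade
# detectors return.
Box = Tuple[int, int, int, int]


def pick_primary_face(faces: List[Box]) -> Optional[Box]:
    """Return the largest box by area, or None when no face was found."""
    if not faces:
        return None
    return max(faces, key=lambda box: box[2] * box[3])


detected = [(40, 60, 80, 80), (200, 50, 120, 120), (350, 90, 60, 60)]
print(pick_primary_face(detected))  # (200, 50, 120, 120)
```

A larger box usually means a face closer to the camera, which is why area is used here as a stand-in for prominence.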

Face recognition

Face recognition is an enhanced form of face detection. Instead of simply letting the camera detect any face in the crowd, the camera can be configured to recognise a particular person’s face and use that as the focal point. Photos of that person can be taken and saved in the memory. This could be a useful tool when searching for images of a particular person who was recognised by the camera at the time the picture/video was taken.

Face recognition can be implemented using many algorithms, such as Eigenface, Fisherface and local binary patterns histogram (LBPH). Eigenface was the first successful technique used for face recognition. It uses principal component analysis (PCA) to project an image to a low-dimensional feature space.

The Fisherface method is an enhancement of Eigenface that uses Fisher’s linear discriminant analysis (FLDA).

Eigenface algorithm

In the Eigenface algorithm, Eigenfaces are a set of Eigenvectors used in computer vision for human face recognition.

A set of Eigenfaces is generated by applying PCA to a collection of training face images. Each n×n-pixel image is treated as a single point in an n×n-dimensional image space, and PCA identifies the directions in that space along which the face images vary the most. These direction vectors are the Eigenvectors, and hence the Eigenfaces. Since no high-resolution digital camera was used to record images in this demonstration, the space occupied by every single face is small.
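The PCA step can be sketched with NumPy as shown below. This is a minimal illustration on random data, not the code used in the article's program (which relies on OpenCV's built-in recogniser). The function names compute_eigenfaces and project are hypothetical, and the 8×8 synthetic images are an assumption made to keep the example small.

```python
import numpy as np


def compute_eigenfaces(images, num_components):
    """Return (mean_face, eigenfaces) for a stack of equally sized grey images.

    images: array of shape (m, h, w); each image is flattened to one
    point in an h*w-dimensional space.
    """
    m = images.shape[0]
    data = images.reshape(m, -1).astype(np.float64)
    mean_face = data.mean(axis=0)
    centered = data - mean_face
    # SVD of the centred data: the rows of vt are the principal axes
    # (the Eigenfaces), ordered by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean_face, vt[:num_components]


def project(image, mean_face, eigenfaces):
    """Describe one image by its weights along each Eigenface."""
    return eigenfaces @ (image.reshape(-1).astype(np.float64) - mean_face)


rng = np.random.default_rng(0)
faces = rng.random((6, 8, 8))          # six synthetic 8x8 "faces"
mean_face, eigenfaces = compute_eigenfaces(faces, num_components=3)
weights = project(faces[0], mean_face, eigenfaces)
print(eigenfaces.shape, weights.shape)  # (3, 64) (3,)
```

Recognition then amounts to comparing the weight vector of a new face with the weight vectors of the trained faces and picking the closest one.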

Software

A system running Ubuntu 18.04, or a Raspberry Pi running any version of Raspbian (installed through NOOBS, for example) or Debian, can be used, along with an optional external USB camera. (Testing was done using a laptop with inbuilt camera and Ubuntu 18.04 at EFY Lab.)

To install OpenCV, type the following commands in the terminal:

$ sudo apt-get update

$ sudo apt-get upgrade

$ sudo apt-get install python-opencv

$ sudo apt-get install libopencv-dev

These will install all libraries required to run OpenCV with Python. To check whether OpenCV has been installed, start the Python interpreter from the terminal by typing the following:

$ python

>>> import cv2

>>>

If there is no error message, it means OpenCV has been installed successfully.
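The same check can be done from a small script. The sketch below is an illustration only, and the function name opencv_status is hypothetical. It also reports whether the cv2.face module is present, since the face recogniser classes live there and that module is part of OpenCV's contrib modules, which not every build includes.

```python
import importlib


def opencv_status():
    """Return a short description of the installed OpenCV, if any."""
    try:
        cv2 = importlib.import_module("cv2")
    except ImportError:
        return "OpenCV (cv2) is not installed"
    # The recognisers live in the cv2.face module, which is only present
    # in builds that include the contrib modules.
    has_face = hasattr(cv2, "face")
    return "OpenCV {} found; cv2.face available: {}".format(
        cv2.__version__, has_face)


print(opencv_status())
```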

Download all relevant files, including the main face recognition program (gui.py), written in Python, from the source code folder available on source.efymag.com. Then, extract the folder to your system, where you will find an empty folder called datasets. Navigate to the extracted folder in the terminal to run the face recognition program as explained in the testing steps below.

Testing steps for OpenCV Face Recognition System:

The steps are as follows:

1. Create a subfolder (say, sai) inside the datasets folder for the user whose face is to be trained.

2. Run the Python-based face recognition program. For that, type the command given below.

$ python gui.py

You will see a welcome GUI window as shown in Fig. 1.

In the GUI, type the same name (sai) that you gave the subfolder inside the datasets folder. Press ‘train’ to start the face training program. The camera app will turn on and the program will start to capture video frames of the face.

Sit in front of the camera while it captures frames during training. It captures 100 photos with different facial expressions. Capturing 100 frames ensures that the values for the user’s different facial expressions are properly stored, so that the program can easily recognise the user’s face in future. Note that only the front of the face (facing the camera) is considered in this algorithm.

3. Once about 100 frames of the face have been captured, the eigen_train_face.xml file will be generated automatically. It stores the values of the captured faces. Then, to close the training program, press q on the keyboard while the camera app is still running.

(When you train again, the xml file will not be recreated; it will just get updated.)

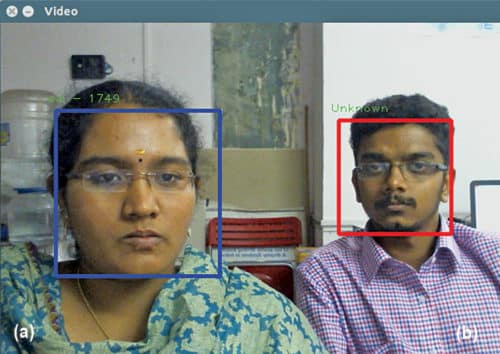

4. Once the camera app is closed, press ‘recognize’ and the camera will start to recognise the faces from the database of captured frames. That is, the program will start matching the current face with the faces stored in the database. If a match is found, it will display the user’s name along with the confidence value (Fig. 2).

If a match is not found, it will automatically display ‘unknown’ on the screen. The number shown along with the name is the confidence value of the match, which, in turn, depends on various parameters like lighting, clarity of the frames taken, and use of the right Haar cascade face detection configuration and .xml files.

Training should be done for one person at a time so that the program can train and recognise the faces efficiently. This program can be further improved with more extraction features and added facilities.
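The decision between a name and ‘unknown’ in step 4 can be sketched as follows. This is not taken from gui.py, whose internals are not shown here. The function name label_for and the threshold of 3000 are assumptions for illustration. What is known is that OpenCV's Eigenface recogniser returns a label and a distance-like confidence from predict(), where a smaller value means a closer match.

```python
def label_for(prediction, names, max_distance):
    """Map a (label_id, distance) prediction to a display name.

    For OpenCV's Eigenface recogniser the second value is a distance,
    so a smaller number means a closer match.
    """
    label_id, distance = prediction
    if distance > max_distance or label_id not in names:
        return "unknown"
    return "{} ({:.0f})".format(names[label_id], distance)


names = {0: "sai"}                     # label id -> subfolder name
print(label_for((0, 2150.0), names, max_distance=3000))  # sai (2150)
print(label_for((0, 4800.0), names, max_distance=3000))  # unknown
```

A suitable threshold has to be found by experiment, since the distances depend on image size, lighting and the number of training frames.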

Saipriya Mukundha Prabhu is a robotics engineer at Bluetronics, Bengaluru. Her interests include robot operating systems, image processing and the Internet of Things (IoT).

Hi, when I click train I get this error. Please help me fix it.

File "train_eigen.py", line 104, in <module>

trainer = TrainEigenFaces()

File "train_eigen.py", line 19, in __init__

self.model = cv2.face.createEigenFaceRecognizer()

AttributeError: 'module' object has no attribute 'createEigenFaceRecognizer'

Hi Ali,

Try changing the line self.model = cv2.face.createEigenFaceRecognizer() to

self.model = cv2.face.LBPHFaceRecognizer_create()

If the error is still not solved, let me know the Python version and OpenCV version you use.
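Note that the replacement above switches the recogniser from Eigenface to LBPH. To stay with Eigenface, the sketch below may help. OpenCV 3.3 and later expose the factory as cv2.face.EigenFaceRecognizer_create(), while earlier 3.x releases used cv2.face.createEigenFaceRecognizer(). The helper name create_eigen_recognizer is hypothetical, and a stand-in object replaces cv2.face so that the example runs without OpenCV installed.

```python
from types import SimpleNamespace


def create_eigen_recognizer(face_module):
    """Create an Eigenface recogniser from a cv2.face-like module.

    Tries the newer factory name first, then the older one.
    """
    for factory_name in ("EigenFaceRecognizer_create",
                         "createEigenFaceRecognizer"):
        factory = getattr(face_module, factory_name, None)
        if factory is not None:
            return factory()
    raise AttributeError("no Eigenface factory found in the face module")


# Stand-in for cv2.face so the sketch runs without OpenCV installed;
# in the real program you would pass cv2.face instead.
fake_face = SimpleNamespace(EigenFaceRecognizer_create=lambda: "eigen-model")
print(create_eigen_recognizer(fake_face))  # eigen-model
```

In train_eigen.py, the equivalent change would be self.model = create_eigen_recognizer(cv2.face), assuming cv2.face is available in your build.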

Hi, I am not able to find the source code. Could you send it to my email ID?

Thank you.

Please refresh the page and re-download.