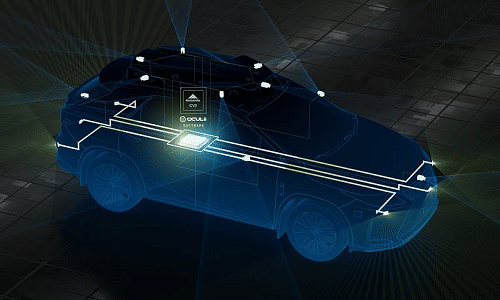

Ambarella’s centralized 4D imaging radar with Oculii technology provides a flexible and high-performance perception architecture that enables system integrators to future-proof their radar designs.

Ambarella’s centrally processed 4D imaging radar architecture can enhance the safety and driveability of autonomous vehicles. Ambarella describes it as the world’s first centralized 4D imaging radar architecture. It allows both central processing of raw radar data and deep, low-level fusion with other sensor inputs, including cameras, lidar and ultrasonics. The architecture is intended to enable higher-performance imaging radar systems and new ADAS/AD features while optimizing the cost of radar sensing. It is suitable for level 2+ to level 5 autonomous vehicles, as well as autonomous mobile robots (AMRs) and automated guided vehicle (AGV) robots.

“No other semiconductor and software company has advanced in-house capabilities for both radar and camera technologies, as well as AI processing,” said Fermi Wang, President and CEO of Ambarella. “This expertise allowed us to create an unprecedented centralized architecture that combines our unique Oculii radar algorithms with the CV3’s industry-leading domain control performance per watt to efficiently enable new levels of AI perception, sensor fusion and path planning that will help realize the full potential of ADAS, autonomous driving and robotics.”

The data sets produced by competing 4D imaging radar technologies are too large to transport and process centrally. Each of their modules uses thousands of MIMO (multiple-input, multiple-output) antennas to reach the high angular resolution that 4D imaging radar requires. As a result, each module generates multiple terabits per second of data and consumes more than 20 watts of power. Those figures multiply across the six or more radar modules needed to cover a vehicle, which makes central processing impractical for approaches that must handle data from thousands of antennas.
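To make the scaling concrete, the short calculation below totals data rate and power across a six-module vehicle. The article gives only lower bounds ("multiple terabits per second", "more than 20 watts"), so the 2 Tbit/s and 20 W per-module values are illustrative assumptions, as are the hypothetical `RadarModule` and `vehicle_totals` names. Treat the result as a rough lower-bound sketch, not measured data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RadarModule:
    """Hypothetical per-module figures for a conventional 4D imaging radar."""
    data_rate_tbps: float  # raw data generated per module, terabits per second
    power_w: float         # power consumed per module, watts


def vehicle_totals(module: RadarModule, count: int) -> tuple[float, float]:
    """Return (total data rate in Tbit/s, total power in W) for `count` identical modules."""
    if count < 1:
        raise ValueError("count must be at least 1")
    return module.data_rate_tbps * count, module.power_w * count


# ASSUMPTION: 2 Tbit/s stands in for "multiple terabits per second",
# and 20 W is the article's "more than 20 watts" lower bound.
conventional = RadarModule(data_rate_tbps=2.0, power_w=20.0)
rate, power = vehicle_totals(conventional, count=6)
print(f"{rate:.0f} Tbit/s and {power:.0f} W across 6 modules")
# prints "12 Tbit/s and 120 W across 6 modules"
```

Even with these conservative inputs, a central processor would have to ingest on the order of ten terabits per second, which illustrates why the article calls central processing impractical for such designs.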

Oculii technology reduces the antenna array of each processor-less monolithic microwave integrated circuit (MMIC) radar head in this new architecture to 6 transmit × 8 receive antennas. It achieves this by applying AI software to dynamically adapt the radar waveforms generated with existing MMIC devices, and by using AI sparsification to create virtual antennas.
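For background on the "virtual antennas" idea, the sketch below shows the conventional MIMO virtual array, not Oculii's AI sparsification, whose details the article does not describe. In a standard MIMO radar, each transmit/receive pair behaves like one virtual element located at the sum of the TX and RX positions, so 6 TX × 8 RX gives 48 virtual channels from 14 physical antennas. The 1-D layout, the half-wavelength RX spacing, the TX spacing of 8 half-wavelengths, and the `virtual_array` helper are all illustrative assumptions.

```python
def virtual_array(tx_positions, rx_positions):
    """Conventional MIMO: each TX/RX pair acts as one virtual element at tx + rx."""
    return sorted(t + r for t in tx_positions for r in rx_positions)


NUM_TX, NUM_RX = 6, 8

# ASSUMPTION: positions are in units of half a wavelength (lambda / 2).
rx = list(range(NUM_RX))                   # 0, 1, ..., 7
tx = [NUM_RX * i for i in range(NUM_TX)]   # 0, 8, ..., 40

virtual = virtual_array(tx, rx)
print(len(virtual))                 # 48 virtual elements from 14 physical antennas
print(virtual == list(range(48)))   # True: a gap-free uniform virtual array
```

Spacing the transmitters by the full width of the receive array is the textbook way to make the virtual positions tile without gaps or overlaps. Any gains from waveform adaptation or sparsification would come on top of this baseline and are not modeled here.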