An electronic skin that learns from ‘pain’ could aid in the development of a new generation of intelligent robots with human-like sensitivity.

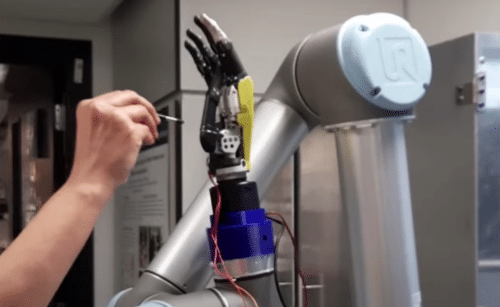

A team of engineers from the University of Glasgow developed the artificial skin using a new type of processing system based on ‘synaptic transistors’, which mimic the brain’s neural pathways in order to learn. A robot hand fitted with the smart skin shows a remarkable ability to learn to react to external stimuli. In a new paper published today in the journal Science Robotics, the researchers describe how they built their prototype computational electronic skin (e-skin) and how it improves on the current state of the art in touch-sensitive robotics.

The Glasgow team’s new form of electronic skin draws inspiration from how the human peripheral nervous system interprets signals from the skin. To build an electronic skin capable of a computationally efficient, synapse-like response, the researchers printed a grid of 168 synaptic transistors made from zinc-oxide nanowires directly onto the surface of a flexible plastic. They then connected the synaptic transistor to a skin sensor on the palm of a fully articulated, human-shaped robot hand.

When the sensor is touched, it registers a change in its electrical resistance: a small change corresponds to a light touch, and a larger change to a harder touch. This input is designed to mimic the way sensory neurons work in the human body. A circuit built into the skin acts as an artificial synapse, reducing the input to a simple voltage spike whose frequency varies with the amount of pressure applied to the skin, which speeds up the reaction.
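The encoding described above, in which a larger resistance change produces a higher spike frequency, can be sketched in a few lines of Python. This is only an illustrative model, not the team's circuit: the linear mapping, the function names and every numeric value (`base_hz`, `gain_hz_per_ohm`, `max_hz`) are assumptions chosen for readability.

```python
def spike_frequency_hz(delta_r_ohm, base_hz=1.0, gain_hz_per_ohm=0.5, max_hz=100.0):
    """Map a resistance change to a spike frequency.

    Hypothetical linear model with saturation; all constants are
    illustrative and not taken from the published work.
    """
    if delta_r_ohm < 0:
        raise ValueError("resistance change must be non-negative")
    return min(base_hz + gain_hz_per_ohm * delta_r_ohm, max_hz)


def spike_times(frequency_hz, duration_s):
    """Return evenly spaced spike timestamps (seconds) at a constant frequency."""
    count = int(frequency_hz * duration_s)
    return [i / frequency_hz for i in range(count)]


light_touch = spike_frequency_hz(10)    # small resistance change
hard_touch = spike_frequency_hz(150)    # large resistance change
print(light_touch, hard_touch)
print(len(spike_times(light_touch, 1.0)), "spikes in one second for a light touch")
```

Under these assumed constants, a harder press simply yields more spikes per second, so downstream logic only needs to look at spike rate rather than the raw sensor signal.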

The team used the varying output of that voltage spike to teach the skin appropriate responses to simulated pain, which would trigger the robot hand to react. By setting a threshold of input voltage to cause a reaction, the team could make the robot hand recoil from a sharp jab in the centre of its palm. In other words, it learned to move away from a source of simulated discomfort through onboard information processing that mimics how the human nervous system works.
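The threshold behaviour can be illustrated with a minimal Python sketch. It assumes a fixed, hand-picked threshold and plain software comparison. The real system performs this processing in the skin's own hardware, so the class name `ReflexController`, the action labels and the voltage values below are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReflexController:
    """Toy reflex: recoil when the input voltage reaches a threshold.

    threshold_v is a hypothetical value, not one reported by the researchers.
    """
    threshold_v: float

    def react(self, input_v: float) -> str:
        # At or above the threshold the hand pulls away; otherwise it stays put.
        return "recoil" if input_v >= self.threshold_v else "hold"


def first_recoil_index(readings, controller):
    """Return the index of the first reading that triggers a recoil, or None."""
    for index, voltage in enumerate(readings):
        if controller.react(voltage) == "recoil":
            return index
    return None


controller = ReflexController(threshold_v=2.0)
readings = [0.3, 0.8, 1.5, 3.1, 0.4]  # made-up voltages: gentle touches, then a jab
print([controller.react(v) for v in readings])
print(first_recoil_index(readings, controller))
```

Raising or lowering `threshold_v` changes how hard a touch must be before the hand reacts, which is the role the article ascribes to the chosen input-voltage threshold.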

Professor Ravinder Dahiya, of the University’s James Watt School of Engineering, said, “We all learn early on in our lives to respond appropriately to unexpected stimuli like pain in order to prevent us from hurting ourselves again. Of course, the development of this new form of electronic skin didn’t really involve inflicting pain as we know it – it’s simply a shorthand way to explain the process of learning from external stimulus.”

Fengyuan Liu, a member of the Bendable Electronics and Sensing Technologies (BEST) group and a co-author of the paper, added, “In the future, this research could be the basis for a more advanced electronic skin which enables robots capable of exploring and interacting with the world in new ways, or building prosthetic limbs which are capable of near-human levels of touch sensitivity.”
