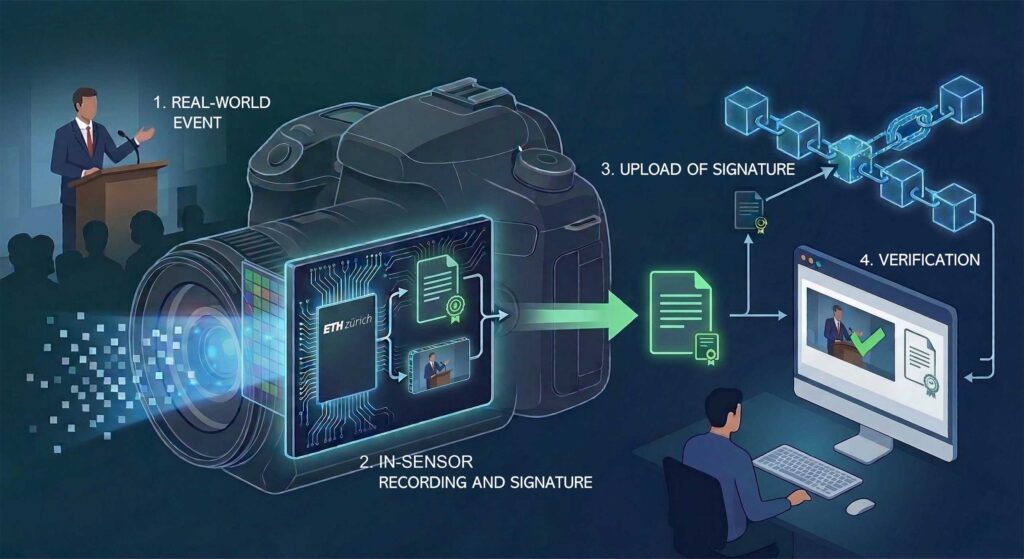

Hardware-level cryptographic signing enables real-time verification of the authenticity of images, video, and audio.

Researchers at ETH Zurich have developed a chip-based solution to detect deepfakes by verifying the authenticity of digital content at the point of capture. The approach shifts deepfake detection from post-processing software to hardware-level validation, offering a more robust defence against AI-generated manipulation.

The technology integrates cryptographic functions directly into sensor chips, allowing images, videos, and audio signals to be digitally signed the instant they are recorded. The signature binds the data to its source, so it can be used to confirm the data’s origin, timestamp, and integrity. Any subsequent alteration invalidates the signature, making tampering immediately detectable.
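The signing step can be sketched in Python. This is a minimal illustration under stated assumptions, not the ETH Zurich design. The article does not say which algorithm the chip uses, so HMAC-SHA256 from the standard library stands in for the asymmetric signature (for example ECDSA or Ed25519) that a real sensor would more likely use. `DEVICE_KEY`, `DEVICE_ID`, `sign_capture` and `verify_capture` are hypothetical names. The signed record covers a SHA-256 digest of the raw data plus the device identifier and timestamp, so changing any of the three causes verification to fail.

```python
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical per-device secret. On a real sensor chip the key would be
# provisioned in secure hardware and never exported. HMAC is a symmetric
# stand-in here for an asymmetric signature scheme.
DEVICE_KEY = b"example-device-secret"
DEVICE_ID = "sensor-0001"  # hypothetical identifier


def _canonical(record: dict) -> bytes:
    """Serialize the signed fields deterministically (sorted keys)."""
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode()


def sign_capture(data: bytes, timestamp: Optional[float] = None) -> dict:
    """Bind raw sensor data to its origin and capture time at recording."""
    record = {
        "device_id": DEVICE_ID,
        "timestamp": time.time() if timestamp is None else timestamp,
        "sha256": hashlib.sha256(data).hexdigest(),
    }
    tag = hmac.new(DEVICE_KEY, _canonical(record), hashlib.sha256).hexdigest()
    return {**record, "tag": tag}


def verify_capture(data: bytes, signed: dict) -> bool:
    """Return True only if the data and its metadata are unchanged."""
    record = {k: signed[k] for k in ("device_id", "timestamp", "sha256")}
    if hashlib.sha256(data).hexdigest() != record["sha256"]:
        return False  # the content itself was altered
    expected = hmac.new(DEVICE_KEY, _canonical(record), hashlib.sha256).hexdigest()
    # Constant-time comparison detects edits to device_id or timestamp.
    return hmac.compare_digest(expected, signed["tag"])


frame = b"\x10\x20\x30"  # stand-in for raw pixel bytes
sig = sign_capture(frame, timestamp=1700000000.0)
print(verify_capture(frame, sig))            # True
print(verify_capture(frame + b"\x00", sig))  # False: pixels changed
forged = {**sig, "timestamp": 1800000000.0}
print(verify_capture(frame, forged))         # False: timestamp changed
```

With HMAC the verifier must hold the same secret key as the device. An asymmetric scheme would instead let anyone verify with a public key while the private key stays inside the chip, which is what makes independent third-party checks possible.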

Unlike conventional methods that attempt to detect manipulated media after distribution, the approach embeds trust at the source. By creating a hardware-rooted “chain of authenticity,” the system ensures that content carries verifiable proof of origin from the moment it is generated. This significantly reduces reliance on software-based detection tools, which often struggle to keep pace with increasingly sophisticated deepfake techniques.

To enable scalable verification, the chip-generated signatures can be stored in tamper-resistant public ledgers such as blockchain systems. This allows platforms, institutions, or end users to independently verify whether a piece of content is genuine by matching it with its original signature.
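The ledger lookup can likewise be sketched with a simple hash chain. It is a toy stand-in for a blockchain, assumed for illustration only: there is no consensus or replication, and `HashChainLedger`, `append`, `lookup` and `chain_is_intact` are hypothetical names. Each entry stores a content digest and a signature and commits to the hash of the previous entry, so editing any stored record breaks the chain. Checking that the signature itself is valid is a separate step not shown here.

```python
import hashlib
import json


def _hash_body(body: dict) -> str:
    """SHA-256 over a deterministic JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class HashChainLedger:
    """Append-only log in which each entry commits to the one before it.

    Toy stand-in for a public ledger: only the hash-chaining that makes
    silent edits detectable is modelled.
    """

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries = []

    def append(self, content_sha256: str, signature: str) -> dict:
        """Register a content digest and its signature at the end of the chain."""
        prev = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        body = {"content_sha256": content_sha256, "signature": signature, "prev": prev}
        entry = {**body, "entry_hash": _hash_body(body)}
        self.entries.append(entry)
        return entry

    def chain_is_intact(self) -> bool:
        """Recompute every entry hash and link; any edit makes this False."""
        prev = self.GENESIS
        for e in self.entries:
            body = {k: e[k] for k in ("content_sha256", "signature", "prev")}
            if e["prev"] != prev or e["entry_hash"] != _hash_body(body):
                return False
            prev = e["entry_hash"]
        return True

    def lookup(self, content: bytes):
        """Return the registered signature for this exact content, or None."""
        digest = hashlib.sha256(content).hexdigest()
        for e in self.entries:
            if e["content_sha256"] == digest:
                return e["signature"]
        return None


ledger = HashChainLedger()
photo = b"raw sensor bytes"
ledger.append(hashlib.sha256(photo).hexdigest(), "sig-from-chip")  # hypothetical signature
print(ledger.lookup(photo))          # sig-from-chip
print(ledger.lookup(photo + b"!"))   # None: altered content is not registered
print(ledger.chain_is_intact())      # True
ledger.entries[0]["signature"] = "forged"
print(ledger.chain_is_intact())      # False: the edit breaks the entry hash
```

Because lookup is by content digest, even a one-byte change to a file means no matching record is found, which is the "matching" step the paragraph describes.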

The implications for electronics and digital infrastructure are substantial. The sensor-level integration means the technology can be embedded into a wide range of devices, including smartphones, cameras, and IoT systems. This opens pathways for automated authenticity checks across social media, journalism, legal evidence, and scientific data workflows.

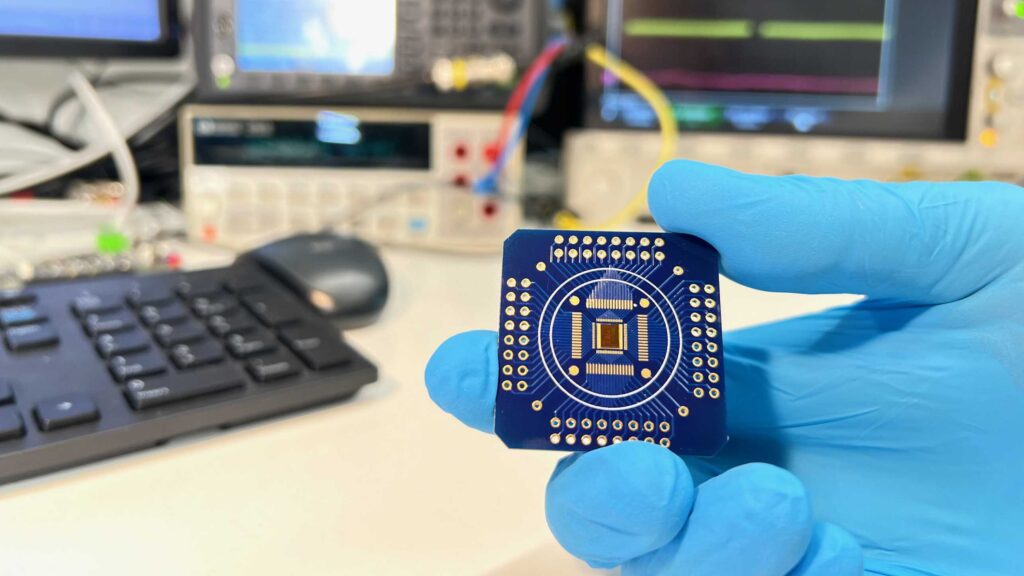

The chip currently exists as a prototype, which demonstrates that security can feasibly be embedded directly into sensing hardware. However, further work is needed to optimise cost, scalability, and integration before commercial deployment.

As deepfake content continues to erode trust in digital media, hardware-based verification architectures such as this could become a foundational element in next-generation secure electronics, ensuring authenticity is built into data at its origin rather than verified after the fact.