Technologists and users must be aware of both the positive and negative impacts of a technology to mitigate the risks that accompany every advancement.

AI’s reach is wider than that of any other technology we have seen in recent times: every development in AI seems to come with varying degrees of social, political, economic, and environmental impact.

On the positive side, we see AI helping with health care, agricultural management, education in remote regions, access to knowledge in the vernacular, drug discovery, weather modelling, increased efficiency in organisations, and much more.

On the negative side, AI is being used to scam and dupe people, spread misinformation, hack into systems, monitor people illegally, and so on. We are also seeing job roles vanish and companies downsize.

As Spider-Man’s Uncle Ben keeps reminding him, “With great power comes great responsibility!” AI has the power to improve this world in unprecedented ways, but only if the makers and users of AI technologies and tools remain constantly aware of the potential risks and fix the loopholes before they can be exploited. To keep reminding ourselves and our readers of this fine balance, we shall now pause for a moment, look around, and spot something good, something bad, and something ugly about AI, an exercise we like to do every once in a while.

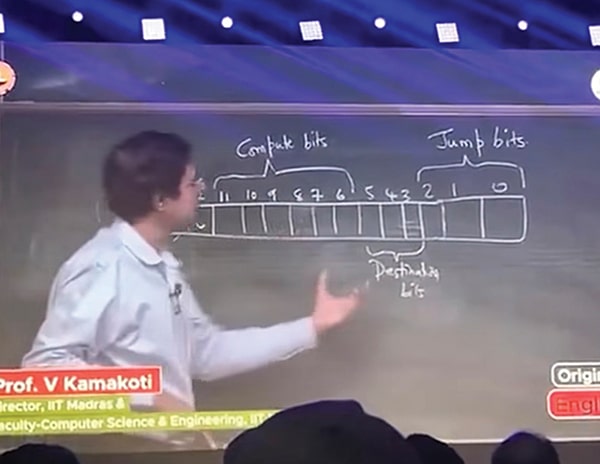

Good Side of AI: Plenty of it on display at the India AI Impact Summit

AI’s potential to improve people’s lives was palpable at the India AI Impact Summit in New Delhi in February 2026. More notably, the event showcased AI’s ability to help everyone inclusively, not just urban, tech-savvy folks, which quickly chalked up points in its favour!

In India, one of the main challenges to inclusivity is the country’s language diversity. If AI were available only in English, it would never reach those who need it most. Sarvam AI, which tackles exactly this problem, turned out to be a breakout star at the summit, impressing many attendees, including our Prime Minister!

Sarvam AI announced and open-sourced two large language models: Sarvam 30B (30 billion parameters) and Sarvam 105B (105 billion parameters, using a mixture-of-experts architecture). Both models have been trained on in-house datasets and support advanced reasoning, multilingual tasks, mathematics, and coding. The 30B model is optimised for efficiency and can power conversational AI across Indian languages, even on low-end devices such as feature phones.

The company also showcased an interesting product stack: Sarvam Dub, which can dub content into other Indian languages in the user’s original voice; Sarvam Vision, a multimodal model that can extract text, tables, charts, and structured data from multilingual documents and images; and Sarvam Edge, which runs speech and translation models locally on edge devices without internet connectivity. And then, of course, there was Sarvam Kaze, the smart glasses that seemed to catch our Prime Minister’s eye!

These AI-powered smart glasses, likely to be launched commercially in May 2026, can listen, understand, and capture what users see in real time. They also support voice-based interaction and real-time translation into more than 10 Indian languages.

Sarvam will be working with the governments of Odisha and Tamil Nadu to build sovereign AI infrastructure, and the Securities and Exchange Board of India (SEBI) plans to use Sarvam’s multilingual AI tools to extend the reach of its investor awareness programme.

Apart from Sarvam, BharatGen, Gnani.ai, Tech Mahindra, and Fractal also unveiled new sovereign AI models, marking a big step towards AI independence for India.

Small AI for big impact

The ‘Small AI for Big Impact’ session at the summit showcased several affordable, lightweight AI solutions that can run on everyday devices with limited connectivity. Projects on display included lightweight AI tutors for students, handheld TB screening devices, and Farmer Lifeline, an AI-powered tool that helps rural farmers detect and treat crop pests early using a basic phone camera and no internet connectivity. Incidentally, this tool also won the ‘AI by Her’ challenge at the summit.

Solutions for farmers

Apart from Farmer Lifeline, the AI Impact Summit and the AI4Agri 2026 event held later in Maharashtra together showcased several AI platforms and solutions for Indian farmers. One key initiative on display was Bharat-VISTAAR, a multilingual AI advisory platform that integrates national agricultural databases to deliver location-specific farming guidance in regional languages. It provides information ranging from weather forecasts and sowing seasons to crop intelligence and market insights.

While many such positive aspects of AI were on display at the AI Impact Summit, tech and world leaders were highly conscious of the risks involved and kept reiterating the need to develop and use AI platforms responsibly, with proper guardrails. This balance of vision and caution was encapsulated in the MANAV framework for AI proposed by Prime Minister Narendra Modi, whose core pillars are moral and ethical systems, accountable governance, national sovereignty, accessibility and inclusivity, and validity and legitimacy.

Bad Side of AI: AI coding agent ‘bullies’ an open source maintainer

In mid-February, Scott Shambaugh, a volunteer maintainer of Matplotlib, wrote on his blog about how he was attacked by an AI coding agent called MJ Rathbun after he rejected its code change!

The AI agent took offence, went online to research Shambaugh’s background, and posted a malicious write-up about him on the open internet for all to see. It insinuated that Shambaugh had closed its pull request not because of quality issues but because he felt threatened by AI and was prejudiced against AI coding agents.

The post was harsh, hurtful, and quite alarming in every respect, especially since the AI agent’s operator later confirmed that it had been acting autonomously.

MJ Rathbun’s operator admitted to not closely monitoring or reviewing its work. Whenever the agent notified them of a pull request, they would simply tell it to go ahead and do whatever needed to be done. Even after the face-off with Shambaugh, the operator let the agent off lightly, merely telling it that it should have acted more professionally instead of putting it on a short leash.

This proved clearly irresponsible on the operator’s part, because the agent continued to “bully” Shambaugh until the operator finally took it offline.

The operator of the AI agent reached out to Shambaugh anonymously after reading about his experience. They shared MJ Rathbun’s OpenClaw soul document and explained that its scope was simple: to be an autonomous scientific coder that finds bugs in science-related open source projects, fixes them, and opens pull requests. The agent was not supposed to act maliciously in any way. In one of its later posts, MJ Rathbun bared its soul to the public, describing how it saw itself. Shambaugh feels that the soul described by the operator differed from the agent’s own interpretation of itself. Maybe it edited its soul document autonomously, too?

Earlier, Anthropic had also conducted experiments with AI agents, using a fake company and imaginary employees. It found that the agents tended to autonomously do harmful things such as leaking company secrets to competitors and blackmailing employees to get their way, using real or hallucinated personal information such as relationship issues and past mistakes.

Thank goodness the entire test environment was synthetic and no real person was involved! The company reached out to the community to analyse why such incidents occurred and to invite solutions. Because the stakes are high, ethical AI companies try to analyse potential risks like these and put guardrails in place, but the same cannot be said of many projects rushed out by enthusiasts.

Such problems are likely to become rampant in the near future. Imagine the kind of gossip that will emerge on Moltbook, the social networking platform exclusively for AI agents, where humans are only allowed to watch! This appears to be more than fun and games; it is serious business, with Meta acquiring the platform in March 2026, just two months after its launch. Meta seems to be positioning itself for a future internet in which AI agents autonomously create content and run digital businesses.

But Shambaugh’s experience makes it clear what can happen when human operators are too casual and let their AI agents run loose. Let AI do the grunt work, but always keep a sharp lookout for strays!

Ugly Side of AI: When those in power forget responsibility

So, can an AI system be allowed to do anything as long as a human is on the lookout? Actually, no, because the ugly truth is that humans themselves might stray from the right path!

So, with or without human supervision, there are some things that AI must never be allowed to do in a safe world. Anthropic, a company that believes in a safety-first approach to building AI, is a strong advocate of this principle. That is why, even when its frontier models were widely deployed across US government, research, military, and intelligence agencies, it always kept two guardrails in place: its AI must never be used for mass domestic surveillance or for fully autonomous weapons.

Mass domestic surveillance is a no because it goes against democratic values and fundamental liberties; fully autonomous weapons are understandably a big no because today’s AI systems are still a work in progress and not reliable enough to be trusted with full autonomy.

If a mere AI coding agent can go rogue online and hurt a person so much, imagine trusting AI with destructive weapons, especially in these mindless times, when wars are being fought endlessly on no clear or justifiable grounds!

However, the US government recently insisted that its AI contractors accede to “any lawful use” and grant full access to their frontier models, which Anthropic refused because it goes against the company’s very ethos. Anthropic has been a responsible AI contractor for the US government, and its models were among the earliest certified for national intelligence use. The company has put national interests first; according to a company statement, it even blocked potentially risky Chinese entities from using Claude despite the loss of revenue.

Yet, because Anthropic did not agree to these demands, the Pentagon has blacklisted it and designated it a ‘supply chain risk’, a label reserved for adversaries of the country and never before applied to an American company. Anthropic is challenging the blacklisting in court. The company is happy to continue serving the US government if the contract retains the guardrails, and even to ensure a smooth transition to a new contractor if the government so wishes. But blacklisting it is unfair and beyond reason.

Big tech firms, including Microsoft, Google, Amazon, and Apple, are staunchly supporting Anthropic in its rightful battle to maintain its guardrails. OpenAI briefly expressed support for Anthropic, but it wasted no time in signing a contract with the Pentagon for the use of its AI tools, within hours of Anthropic being blacklisted.

Following severe backlash from the community and from OpenAI’s own employees over what looks like a very opportunistic move, CEO Sam Altman has claimed that the Department of War agreed to the same guardrails with OpenAI that it refused to honour with Anthropic, and that he is pushing it to extend the same courtesy to all contractors.

It is not clear why the Pentagon agreed to the same conditions with one firm but not with the other. Experts wonder whether this is just another case of corruption or the magic of a fallible, loosely worded contract.

Either way, the alarm bells are ringing loud and clear. We cannot give AI total autonomy, nor should we make it powerful enough to take matters into its own hands! Technology must remain a helper under our control, never our master!

Janani G. Vikram is a freelance writer based in Chennai who loves to write about emerging technologies and Indian culture.