MIT researchers have developed a touch-sensing robotic hand that accurately recognizes objects with a single grasp, just like humans do.

Robotic hands often pack high-resolution sensors solely into their fingertips, so the fingertips must make full contact with an object to identify it, which may take several grasping attempts. Other designs use lower-resolution sensors that span the entire finger, but these capture less detail, so they also need multiple grasps.

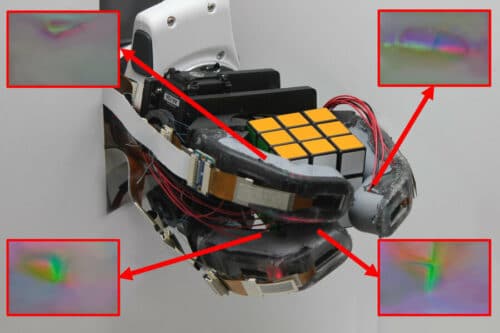

A team of researchers at MIT created a robotic finger that uses high-resolution sensors under soft, transparent skin to detect object shapes. The design captures detailed data from multiple areas of an object at once. The team built a three-fingered robotic hand from these fingers, and it identified objects with 85% accuracy after a single grasp. The rigid skeleton lets the hand lift heavy items, while the soft skin lets it grip pliable objects securely without damaging them. Such soft-rigid fingers could be useful in an at-home-care robot for the elderly, which could lift heavy items and assist with bathing using the same hand.

Each robotic finger has a 3D-printed endoskeleton covered by a transparent silicone skin, mimicking the structure of a human finger. The skin is molded to a curved shape, which removes the need for fasteners or adhesives. GelSight touch sensors sit in the top and middle sections of each endoskeleton, under the transparent skin, and their cameras have overlapping coverage so that sensing is continuous along the finger. Each GelSight sensor uses a camera and three colored LEDs: when the finger grasps an object, the LEDs illuminate the skin and the camera captures images of it. An algorithm then works backward from the illuminated contours in the skin to map the contours of the grasped object's surface. The researchers trained a machine-learning model to identify objects from the raw camera images.
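The article does not say how the backward calculation is done. One classical way to recover surface shape from a scene lit by three known lights is photometric stereo, and the sketch below illustrates that idea only. It assumes a simple Lambertian (matte) skin, one color channel per LED, and known LED directions; the function name `estimate_normals` and the `light_dirs` parameter are hypothetical and not taken from the researchers' code.

```python
import numpy as np

def estimate_normals(image, light_dirs):
    """Estimate per-pixel surface normals from one three-channel image.

    image:      (H, W, 3) float array; channel k is assumed to hold the
                brightness contributed by LED k alone.
    light_dirs: (3, 3) array; row k is the unit vector pointing toward
                LED k (assumed known from calibration).
    """
    h, w, _ = image.shape
    intensities = image.reshape(-1, 3).T            # shape (3, H*W)
    # Lambertian model: intensities = light_dirs @ (albedo * normal).
    # Solving the 3x3 system per pixel gives the albedo-scaled normal.
    scaled = np.linalg.solve(light_dirs, intensities)
    lengths = np.linalg.norm(scaled, axis=0, keepdims=True)
    normals = scaled / np.maximum(lengths, 1e-8)    # drop the albedo factor
    return normals.T.reshape(h, w, 3)
```

The resulting normal map describes how the skin is tilted at each pixel, which is the kind of contour information the paragraph above refers to. A real sensor would need per-device calibration rather than this idealized model.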

One challenge in fabricating the skin was that silicone tends to wear off over time. Adding curves to the joint hinges reduced silicone peeling, while creases kept the silicone from being squashed when the finger bends. The researchers also found that wrinkles in the silicone prevented the skin from tearing. With the design refined, the researchers built a robotic hand by arranging two fingers in a Y pattern with a third finger as an opposing thumb. When the hand grasps an object, it takes six images and sends them to a machine-learning algorithm that identifies the object. Because every finger has tactile sensing, a single grasp yields rich tactile data.
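With two sensors in each of three fingers, one grasp produces six images. The following is a minimal sketch of how those images might be bundled into a single input for a classifier. The finger and segment names, both function names, and the nearest-centroid classifier are illustrative assumptions; the classifier is a toy stand-in for the researchers' trained model, which the article does not describe.

```python
import numpy as np

# Hypothetical labels for the three fingers and the two sensors in each.
FINGERS = ("left", "right", "thumb")
SEGMENTS = ("top", "middle")

def stack_grasp_images(images):
    """Stack the six camera images from one grasp in a fixed order.

    images: dict mapping (finger, segment) -> (H, W, 3) array.
    Returns an array of shape (6, H, W, 3).
    """
    ordered = [images[(f, s)] for f in FINGERS for s in SEGMENTS]
    return np.stack(ordered, axis=0)

def classify_grasp(stacked, centroids):
    """Toy nearest-centroid classifier (a stand-in, not the real model).

    centroids: dict mapping label -> array with the same shape as stacked.
    Returns the label whose centroid is closest in Euclidean distance.
    """
    features = stacked.astype(np.float64).ravel()
    distances = {
        label: np.linalg.norm(features - c.astype(np.float64).ravel())
        for label, c in centroids.items()
    }
    return min(distances, key=distances.get)
```

Keeping the images in a fixed finger and segment order matters because the classifier sees only pixel positions, not which sensor produced them.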

The researchers believe that adding sensing to the palm may help the hand make finer tactile distinctions. They are also working on hardware improvements to reduce silicone wear and on adding more actuation to the thumb so the hand can perform a wider variety of tasks.

Reference: Sandra Q. Liu et al., GelSight EndoFlex: A Soft Endoskeleton Hand with Continuous High-Resolution Tactile Sensing, arXiv (2023). DOI: 10.48550/arxiv.2303.17935