AI's power demands are straining what current chips can deliver. Researchers are testing brain-inspired designs that could change how computers process and store information.

Researchers at the University of Missouri say they have identified a key factor in designing brain-inspired computer hardware that could dramatically reduce the energy demands of artificial intelligence. The finding may help address the growing power consumption of data centers.

The team found that the performance of neuromorphic transistors—electronic components designed to mimic the brain’s synapses—depends heavily on the ultrathin interface where the semiconductor meets the insulating layer. The discovery establishes new design guidelines for building AI hardware capable of learning while using far less power.

The findings could have broad implications as artificial intelligence pushes conventional computing toward an energy limit. AI systems require ever-increasing amounts of computing power, and the rising electricity demand of data centers has raised concerns about whether current chip technology can scale sustainably through the end of the decade.

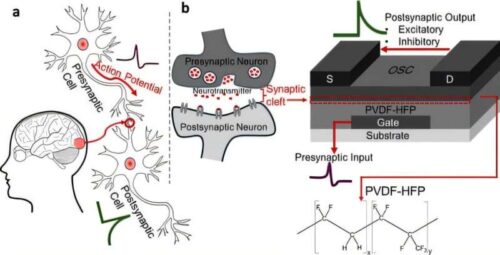

The Missouri researchers are developing organic transistors that combine memory and processing in a single device, an approach modeled on how the brain's synapses work. Conventional computers separate memory and computation into different units. Neuromorphic systems instead perform both functions in the same place, which cuts the energy spent constantly moving data between them.

That architecture mirrors one of the brain’s biggest advantages: efficiency. The human brain can perform complex cognitive tasks while consuming about 20 watts of power, far less than conventional computers performing similar operations.

To test their approach, the researchers evaluated several organic materials that appeared nearly identical in structure. Once integrated into synaptic transistors, however, the materials performed differently. This showed that device behavior depends not only on the material itself but also on how it interacts with the surrounding layers at the molecular level.

The work reflects a broader push in neuromorphic computing, a growing field focused on designing hardware based on the brain’s architecture rather than traditional chip designs. Researchers say such systems could help sustain future advances in AI by reducing the energy costs of increasingly complex computation.

For decades, conventional computing has relied on architectures that separate memory from processing, forcing data to move back and forth between the two. That bottleneck slows performance and increases power use. Neuromorphic designs aim to eliminate this inefficiency by merging both functions into a single device.