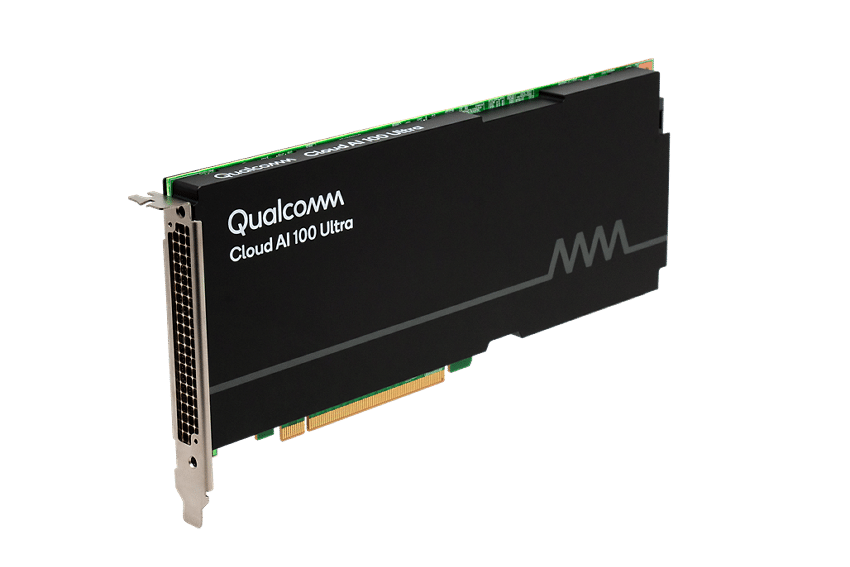

“With the ability to deploy a staggering 100 billion parameter model on a single-width, energy-efficient 150W card, it sets a new standard in the industry.” – Andrew Feldman, chief executive officer of Cerebras

Qualcomm has introduced the Qualcomm Cloud AI 100 Ultra, the latest addition to its lineup of cloud artificial intelligence (AI) inference cards. The card is designed specifically for generative AI and large language models (LLMs).

Built on Qualcomm's AI cores, the new card is expected to deliver up to four times the performance of its predecessor. A single 150-watt card supports models with up to 100 billion parameters, and two cards support models with 175 billion parameters. Larger models can be run by combining multiple Qualcomm Cloud AI 100 Ultra cards through the Qualcomm AI Stack and Cloud AI SDK.

Because it is a programmable AI accelerator, the Qualcomm Cloud AI 100 Ultra can be adapted to new AI techniques and data formats as they emerge. It integrates with the Qualcomm AI Stack, which is intended to simplify porting and optimizing models for the card.

Hewlett Packard Enterprise (HPE) plans to incorporate the Qualcomm Cloud AI 100 Ultra into its HPE ProLiant DL380a Gen11 server, which is designed for generative AI workloads, including natural language processing (NLP).

“To unlock value from generative AI, enterprises will require an AI-native architecture that is purpose-built to support any part of their journey, including inferencing. In collaboration with Qualcomm, we look forward to offering our customers a compute solution that is optimized for inference and provides the performance and power efficiency necessary to deploy and accelerate AI inference at-scale,” said Justin Hotard, executive vice president and general manager of high-performance computing, AI and labs at HPE.

Andrew Feldman, chief executive officer of Cerebras, praised the Qualcomm Cloud AI 100 Ultra for its capabilities, energy efficiency, and performance, particularly when paired with Cerebras' training technology.

According to Qualcomm, the Cloud AI 100 Ultra delivers two to five times the performance per total cost of ownership (TCO) dollar of competing products across generative AI, LLM, NLP, and computer vision workloads. The company positions it as balancing price-performance, power efficiency, scalability, and security, making it a fit for organizations that want to use AI for transformative outcomes while supporting sustainability goals.