From flowcharts to firmware, the embedded design pipeline is evolving. This article examines AI’s growing influence on embedded design workflows.

This article is based on a presentation last year at EFY Expo in Pune titled ‘Harnessing AI to Consolidate and Scale Embedded Systems & IoT Innovations,’ by Tapan Kapoor, Co-Founder, Aviconn Solutions Pvt Ltd. It has been transcribed and curated by Saba Aafreen, Technology Journalist at EFY.

My focus is on some generative AI experiments we conducted in our in-house R&D. The results were promising enough that I felt it worthwhile to share them with an audience that could put this technology to use. I hope our experience sparks interest and helps someone take these ideas forward. Since most of us are already familiar with AI, let’s dive in.

The fusion of AI and embedded designs

A typical embedded system design begins with defining requirements and the intended application. Requirements may exist as text or remain conceptual, forming the starting point. From there, the system is broken down into hardware and software needs.

The designer’s experience plays a key role in selecting the microcontroller, peripherals, power constraints, and timing requirements. These decisions ultimately shape the hardware and software components of the system.

This is where generative AI can help. In other design spaces, complete executable models in C code are already being created directly from requirement descriptions. The system can then be implemented, executed on the target microcontroller, and analysed for bottlenecks. This approach is now making its way into embedded system design.

The advantage is that components that are slow or resource-intensive can be optimised, moved to hardware, or adjusted. Traditionally, breaking a system into hardware and software has been a complex and time-consuming process.
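To make the partitioning idea concrete, here is a minimal sketch of how profiled bottlenecks might be ranked for offloading to hardware. It is an illustration only, not the method used in the experiments described here: the `Task` fields, the example numbers, and the greedy "CPU time saved per unit of hardware cost" rule are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    cpu_share: float  # fraction of CPU time seen in profiling (made-up numbers)
    hw_cost: int      # hypothetical cost of a hardware version, arbitrary units


def pick_hardware_candidates(tasks, hw_budget):
    """Greedily choose tasks to offload: best CPU share per unit of cost first."""
    ranked = sorted(tasks, key=lambda t: t.cpu_share / t.hw_cost, reverse=True)
    chosen, used = [], 0
    for task in ranked:
        # Take the task only if it still fits in the remaining hardware budget.
        if used + task.hw_cost <= hw_budget:
            chosen.append(task.name)
            used += task.hw_cost
    return chosen


# Hypothetical profile of a small firmware image.
tasks = [
    Task("fft", 0.45, 40),
    Task("crc", 0.10, 5),
    Task("ui", 0.05, 30),
    Task("filter", 0.30, 35),
]
print(pick_hardware_candidates(tasks, hw_budget=50))
```

A greedy ranking like this is not guaranteed to be optimal, but it shows the kind of decision that the profiling feedback drives.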

Generative AI automates many of these steps. Starting from a textual description, large language models infer an internal representation that the machine can understand, enabling faster translation from requirements to functional system design.

That intermediate representation can then be simulated on available microcontrollers to identify bottlenecks or power challenges. Based on this feedback, the system can be fine-tuned iteratively, with iterations largely handled by the machine. Existing solutions already manage about 85 to 90 per cent of this process, which is sufficient to save significant engineering effort and reduce time to market.
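The simulate-and-refine loop described above can be pictured with a toy example. The sketch below assumes a hypothetical `simulate` function standing in for a real simulator, with an invented workload size and an invented linear power model. It simply sweeps candidate clock speeds and keeps the slowest one that meets both the latency and the power limits.

```python
def simulate(clock_mhz):
    """Hypothetical stand-in for a simulator: returns (latency_ms, power_mw)."""
    cycles = 2_000_000                          # assumed cycles per frame of work
    latency_ms = cycles / (clock_mhz * 1_000)   # 1 MHz is 1,000 cycles per ms
    power_mw = 5 + 0.4 * clock_mhz              # assumed static plus dynamic power
    return latency_ms, power_mw


def tune_clock(candidates_mhz, max_latency_ms, max_power_mw):
    """Return the lowest clock that meets both limits, or None if none does."""
    for clock in sorted(candidates_mhz):
        latency, power = simulate(clock)
        if latency <= max_latency_ms and power <= max_power_mw:
            return clock
    return None


# Sweep a few assumed clock options against a 30 ms and 50 mW budget.
print(tune_clock([16, 48, 80, 120], max_latency_ms=30, max_power_mw=50))
```

In a real flow the feedback would come from a simulator or from measurements on the target, and the tool would adjust far more than one parameter.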

From text to silicon

Once the system is roughly divided into hardware and software, the next step is to build and validate these components. The hardware is developed, the software is executed on it, and adjustments are made as necessary, refining the system as the design moves to the next stage.

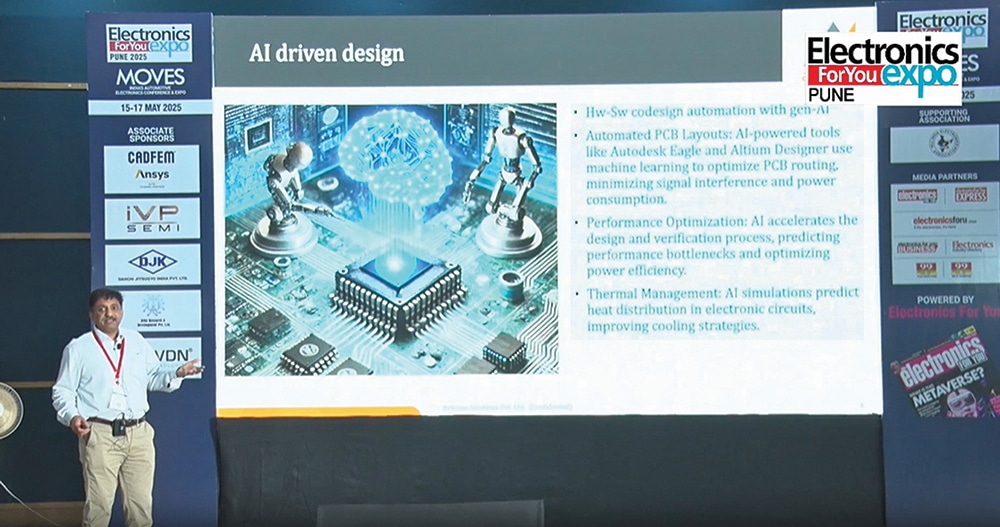

The next level involves hardware schematics, where the functional description generated earlier serves as the foundation for the design. Based on this description, generative AI can help create initial schematic designs using learned libraries and prior design knowledge.

From these schematics, combined with the corresponding software, a complete system can be created. AI can also support the transition from schematic to PCB layout. Many tools already assist in this step, and some are freely available as developers continue to improve their solutions. To some extent, these tools also support schematic entry and can handle first- or second-level PCB layout. While they are not yet mature enough to produce a fully commercial-grade PCB, they still save significant effort and time.

In parallel, these tools optimise performance during hardware–software partitioning and while refining the schematic. Thermal management is another key aspect of this stage. Projects can fail due to inadequate thermal design. For example, a projector engine developed over 20 years ago generated significant heat, causing the commercial product to overheat—a challenge many engineers may have encountered. Generative AI can assist by assessing heat generation from previous designs and predicting thermal challenges as designs move from schematic to PCB, making thermal management a valuable byproduct of the workflow.
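As a rough illustration of the kind of check involved, the standard first-order estimate of junction temperature is ambient temperature plus dissipated power multiplied by the junction-to-ambient thermal resistance. The sketch below applies that formula to a few parts. The part names, power figures, thermal resistances, and the 10 °C safety margin are assumptions chosen for the example, not data from any real design.

```python
def junction_temp_c(ambient_c, power_w, theta_ja_c_per_w):
    """First-order estimate: Tj = Ta + P * theta_JA."""
    return ambient_c + power_w * theta_ja_c_per_w


# Hypothetical parts with assumed dissipation and datasheet-style limits.
parts = {
    "regulator": {"power_w": 1.2, "theta_ja": 50.0, "tj_max": 125.0},
    "mcu": {"power_w": 0.15, "theta_ja": 45.0, "tj_max": 105.0},
    "led_driver": {"power_w": 2.0, "theta_ja": 40.0, "tj_max": 125.0},
}


def thermal_risks(parts, ambient_c, margin_c=10.0):
    """List parts whose estimated Tj comes within margin_c of their limit."""
    risky = []
    for name, p in parts.items():
        tj = junction_temp_c(ambient_c, p["power_w"], p["theta_ja"])
        if tj > p["tj_max"] - margin_c:
            risky.append(name)
    return risky


print(thermal_risks(parts, ambient_c=60.0))
```

This ignores airflow, copper area, and heating from neighbouring parts, so it is only a first screen before a proper thermal simulation.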

When AI brews your coffee

Please help with the following – how to automatically generate a PCB design file,

Circuit design includes automated generation, component selection, and bill of materials (BoM) creation. PCB design incorporates intelligent placement, routing, thermal management, and power and ground plane optimisation.
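Of these steps, BoM creation is the simplest to picture in code. The sketch below assumes the schematic is available as a plain list of (reference, value, footprint) tuples, which is a made-up format for illustration rather than the output of any particular tool. It groups identical parts and counts them.

```python
from collections import defaultdict


def build_bom(components):
    """Group components by (value, footprint) and collect their references."""
    groups = defaultdict(list)
    for ref, value, footprint in components:
        groups[(value, footprint)].append(ref)
    bom = []
    for (value, footprint), refs in sorted(groups.items()):
        bom.append({
            "value": value,
            "footprint": footprint,
            "qty": len(refs),
            "refs": sorted(refs),  # plain string sort, so R10 lists before R2
        })
    return bom


# Hypothetical component list, as might be extracted from a netlist.
components = [
    ("R1", "10k", "0603"),
    ("C1", "100n", "0402"),
    ("R2", "10k", "0603"),
    ("U1", "MCU", "LQFP-48"),
]
for line in build_bom(components):
    print(line)
```

A production BoM would also carry manufacturer part numbers, tolerances, and supplier data, which are left out here.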