When a human being starts learning in the early stages of life, every sight or stimulus is recorded, compared and analysed almost instantaneously in the mind. The process is complex, and we are gifted with a framework that helps us connect, recall and react to events.

In the machine world, intelligence must be taught to otherwise dumb machines. It is now possible to create a framework that lets them learn in a way loosely modelled on how we do. Engineers have been working round the clock to perfect this, and as a result, software such as Caffe provides a gateway to deep learning.

Deep learning is a class of machine learning algorithms. To imbue machines with intelligent decision making, smart deep learning algorithms must be devised. In short, it is the class of machine learning algorithms that gives a machine a framework with which it obtains and processes real-life events.

The techniques involve teaching machines and, eventually, creating a medium of interaction between man and machine. Machine vision and natural language processing are hot topics today, and any developer can play around with Caffe.

It is Caffe and it is brewed

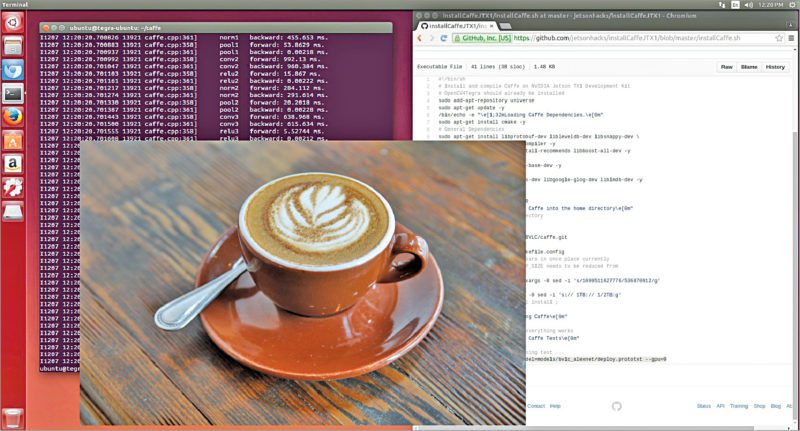

Caffe is a deep learning framework. Its main focus is the visual processing of images and video, such as the output of optical devices like cameras. Deep learning techniques can be combined with the Caffe framework, and visual analytics opens up a wide range of possibilities. Such systems could act as your virtual eyes and brain, among others, for which the credit goes to advancements in sensor technology, high-performance graphics processing and neural networks that keep evolving through advanced algorithms in this field.

How Caffe is brewed

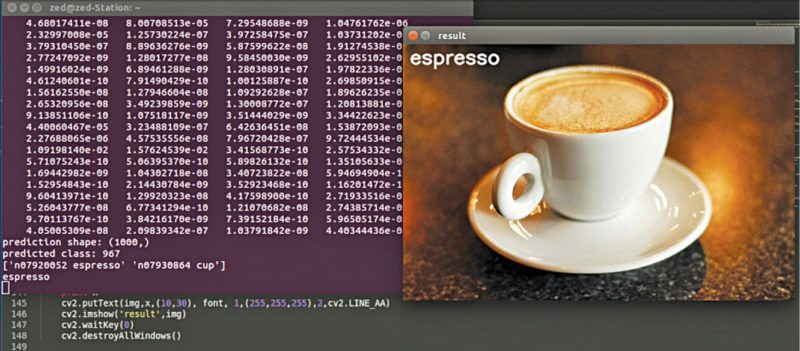

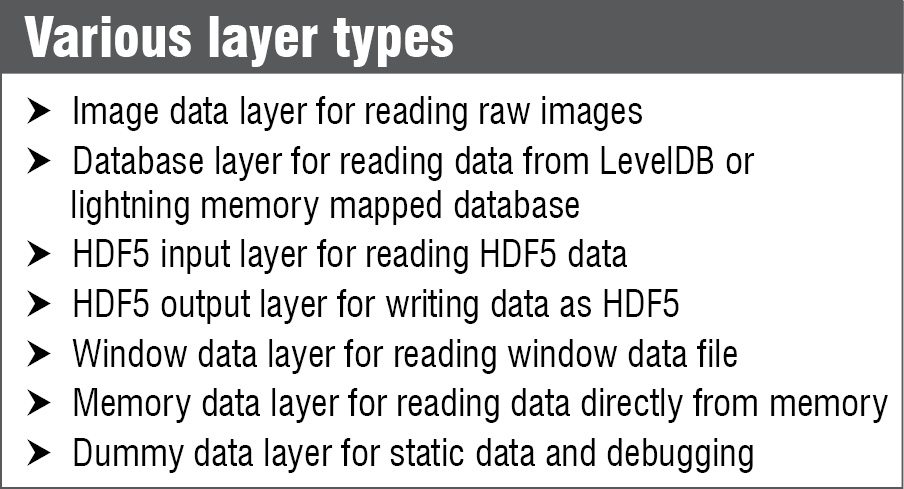

Data in the form of images and videos is what Caffe dines on. Data enters Caffe through data layers that lie at the bottom of nets. Depending upon the use case, it is sourced from databases (LevelDB or LMDB, the Lightning Memory-Mapped Database), directly from memory, or from files on the disk in HDF5 format, and Caffe can then be used to build models on it.

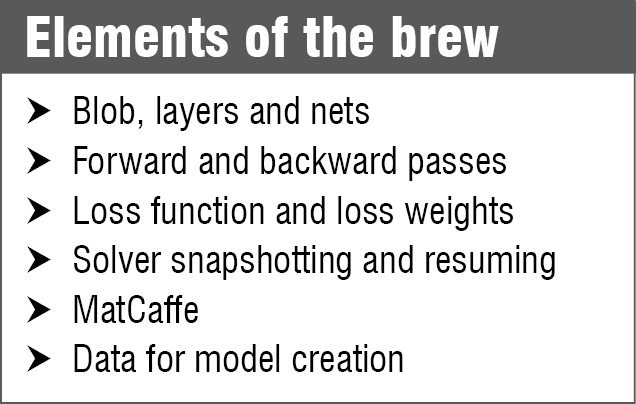

The models of Caffe are deep networks made up of interconnected layers that work on chunks of data. Caffe stores, communicates and manipulates this information in blobs. A blob is an N-dimensional array, and the details of the blob describe how information is stored and communicated in and across layers and nets. The model itself is defined layer by layer in Caffe's own model schema.
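To make the idea of a blob as an N-dimensional array concrete, here is a minimal sketch in plain Python. It assumes a 4-D blob laid out as number x channels x height x width in one flat, row-major buffer, which is the common layout for batches of images. The class name `ToyBlob` and its methods are hypothetical and are not Caffe's actual API.

```python
# Illustrative sketch only: ToyBlob is a hypothetical stand-in, not Caffe's Blob class.
class ToyBlob:
    """A 4-D array (num x channels x height x width) stored in one flat list."""

    def __init__(self, num, channels, height, width):
        self.shape = (num, channels, height, width)
        self.data = [0.0] * (num * channels * height * width)

    def offset(self, n, c, h, w):
        # Row-major layout: the last index (width) changes fastest.
        _, channels, height, width = self.shape
        return ((n * channels + c) * height + h) * width + w

    def get(self, n, c, h, w):
        return self.data[self.offset(n, c, h, w)]

    def set(self, n, c, h, w, value):
        self.data[self.offset(n, c, h, w)] = value


# A batch of 2 images, each with 3 colour channels and 4 x 5 pixels.
blob = ToyBlob(2, 3, 4, 5)
blob.set(1, 2, 3, 4, 7.5)       # last pixel of the last channel of the last image
print(blob.offset(1, 2, 3, 4))  # 119, the final slot of the 120-element buffer
```

Keeping everything in one contiguous buffer is what lets the same chunk of memory be handed from layer to layer without copying it into a new structure each time.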

A net is a collection of connected layers. Each layer has two key responsibilities: a forward pass that takes the inputs and produces the output, and a backward pass that takes the gradient with respect to the output and computes the gradients with respect to the parameters and to the inputs, which are, in turn, back-propagated to earlier layers. All you need to do is define the setup, forward and backward passes for the layer, and it is ready for inclusion in a net.
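The setup, forward and backward responsibilities can be sketched with a toy layer that multiplies its input by a single learnable weight. This is only an illustration in plain Python. The class `ToyScaleLayer` and its method signatures are assumptions made for this example and do not mirror Caffe's real layer interface.

```python
# Illustrative sketch only: ToyScaleLayer is hypothetical, not a Caffe layer.
class ToyScaleLayer:
    """Computes top = weight * bottom, with one learnable parameter."""

    def setup(self, weight):
        # One-time initialisation of the parameter and its gradient.
        self.weight = weight
        self.weight_diff = 0.0

    def forward(self, bottom):
        # Take the inputs, remember them for the backward pass, produce the output.
        self.bottom = list(bottom)
        return [self.weight * x for x in self.bottom]

    def backward(self, top_diff):
        # Gradient with respect to the parameter: sum of top_diff * input.
        self.weight_diff = sum(d * x for d, x in zip(top_diff, self.bottom))
        # Gradient with respect to the inputs, passed back to earlier layers.
        return [d * self.weight for d in top_diff]


layer = ToyScaleLayer()
layer.setup(weight=2.0)
top = layer.forward([1.0, 2.0, 3.0])          # [2.0, 4.0, 6.0]
bottom_diff = layer.backward([1.0, 1.0, 1.0]) # [2.0, 2.0, 2.0]
print(top, bottom_diff, layer.weight_diff)    # weight_diff is 6.0
```

The backward pass returns two things in effect: the parameter gradient, which is kept for the weight update, and the input gradient, which is what gets handed to the layer below.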

Vision based use cases

Use cases of Caffe include surveillance and vision based alerting using the Cloud and computer vision. Vision layers take images from the real world as input and produce other images as output, working with their colour channels and spatial structure. Most of these layers work by applying a particular operation to a region of the input to produce a corresponding region of the output. In contrast, most other layers ignore the spatial structure and treat the input as one big vector with a specific dimension.
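The region-by-region behaviour of a vision layer can be illustrated with max pooling, where each 2 x 2 region of the input becomes one value of the output. The function below is a minimal plain-Python sketch with a hypothetical name. It assumes a single-channel image given as a list of rows and is not how Caffe implements pooling.

```python
# Illustrative sketch only: a plain-Python 2 x 2 max pooling with stride 2.
def max_pool_2x2(image):
    """Map each non-overlapping 2 x 2 input region to its maximum value."""
    rows, cols = len(image), len(image[0])
    pooled = []
    for r in range(0, rows - 1, 2):
        pooled_row = []
        for c in range(0, cols - 1, 2):
            region = (image[r][c], image[r][c + 1],
                      image[r + 1][c], image[r + 1][c + 1])
            pooled_row.append(max(region))
        pooled.append(pooled_row)
    return pooled


image = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
print(max_pool_2x2(image))  # [[6, 8], [14, 16]]
```

The output keeps the spatial arrangement of the input: the top-left output value comes from the top-left input region, and so on.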

Getting down to the basics of video capturing, layers such as convolution come into action. A convolution layer convolves the input image with a set of learnable filters, each of which produces one feature map in the output image. Convolution is an important operation in signal and image processing, and in Caffe, operations such as pooling, cropping, edge detection and other transformations take place in a sequence, sometimes followed by deconvolution, with a loss computed at the end to drive learning.
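As a rough illustration of how one filter yields one feature map, the sketch below slides a small kernel over a single-channel image in plain Python. The function name `convolve2d` and the hand-picked edge kernel are assumptions for this example. In a real network the filter values are learned rather than chosen by hand, and, as is common in deep learning code, the kernel is applied without flipping.

```python
# Illustrative sketch only: a "valid" 2-D convolution with no padding and stride 1.
def convolve2d(image, kernel):
    """Slide the kernel over the image and return one feature map."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    feature_map = []
    for r in range(out_rows):
        out_row = []
        for c in range(out_cols):
            total = 0.0
            for i in range(k_rows):
                for j in range(k_cols):
                    total += image[r + i][c + j] * kernel[i][j]
            out_row.append(total)
        feature_map.append(out_row)
    return feature_map


# A dark-to-bright vertical edge, and a filter that responds to such edges.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
edge_kernel = [[-1, 1]]
print(convolve2d(image, edge_kernel))  # [[0.0, 1.0, 0.0], [0.0, 1.0, 0.0]]
```

The feature map is non-zero only where the brightness changes, which is how a single filter can act as a simple edge detector.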

Caffe learns about the real world

Software like Caffe learns about the real world by measuring a certain level of badness, given by a loss function (also known as an error, cost or objective function). A loss function specifies the goal of learning by mapping parameter settings, that is, the current network weights, to a scalar value that expresses how bad those settings are. The goal of learning in machines is then to find a setting of the weights that minimises the loss function.
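The idea of mapping a weight setting to a single scalar can be sketched with a Euclidean (sum of squares) loss on a toy one-weight model. The model, the data and the function name below are made up purely for illustration.

```python
# Illustrative sketch only: a Euclidean loss for the toy model prediction = weight * x.
def euclidean_loss(weight, inputs, targets):
    """Return one scalar that says how bad this weight setting is."""
    n = len(inputs)
    squared_errors = ((weight * x - t) ** 2 for x, t in zip(inputs, targets))
    return sum(squared_errors) / (2 * n)


inputs = [1.0, 2.0, 3.0]
targets = [2.0, 4.0, 6.0]   # generated by the "true" weight 2.0

for weight in (1.0, 1.5, 2.0):
    print(weight, euclidean_loss(weight, inputs, targets))
```

The loss shrinks as the weight approaches 2.0 and reaches zero there, so "finding the weights that minimise the loss" amounts to recovering the setting that best explains the data.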

In Caffe, loss is computed by the forward pass of the network, where each layer takes a set of input (bottom) blobs and produces a set of output (top) blobs. In simple words, the forward pass yields the loss, the backward pass generates the gradient, and the gradient is then incorporated into a weight update that tries to minimise the loss. This optimisation is coordinated by a solver in Caffe.
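The forward, backward and update cycle can be sketched as a tiny gradient descent loop in plain Python. The one-weight model, the function names, the learning rate and the iteration count are all assumptions chosen for this example. Caffe's own solvers are considerably more elaborate.

```python
# Illustrative sketch only: a toy solver for the model prediction = weight * x.
def forward(weight, inputs, targets):
    """Forward pass: compute the scalar loss for the current weight."""
    n = len(inputs)
    return sum((weight * x - t) ** 2 for x, t in zip(inputs, targets)) / (2 * n)


def backward(weight, inputs, targets):
    """Backward pass: gradient of the loss with respect to the weight."""
    n = len(inputs)
    return sum((weight * x - t) * x for x, t in zip(inputs, targets)) / n


def solve(inputs, targets, weight=0.0, learning_rate=0.1, iterations=100):
    """Repeat forward, backward and weight update, recording the loss."""
    history = []
    for _ in range(iterations):
        history.append(forward(weight, inputs, targets))
        gradient = backward(weight, inputs, targets)
        weight -= learning_rate * gradient   # step against the gradient
    return weight, history


weight, history = solve([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
print(round(weight, 6))          # 2.0
print(history[0] > history[-1])  # True: the loss went down
```

The learning rate controls how far each update moves the weight. Too large a value would overshoot the minimum, which is why it is exposed as a parameter here.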

Significance of deep learning

Machines have made our lives easier and now we are one step closer to making them think. With deep learning, deep networks and tools like Caffe, it is now possible to make machines learn from examples, much as we humans do, in practical applications such as speech recognition and image classification, among others.

In reality, we are building features into machines using such specialised software and letting these connect to smart, artificial neural networks and frameworks to learn for themselves.

Significantly, these machines not only learn features but also act on real-world events. For example, Caffe could work on camera feeds to combine and classify what is seen and, to an extent, make intelligent decisions, such as informing the concerned authorities through email or message alerts in places like a bank ATM in case of unrecognised movement or a threat.

With improvements in processing power and graphics hardware, the bottlenecks of computation have been steadily overcome over the years. Deep learning has already branched out to many other use cases. Text analytics, time-series analytics, image processing and real-time threat detection using video or motion detection are among the areas that use deep learning techniques.
