MLPerf results show how new GPUs and system-level design are enabling faster, scalable inference for large language models and emerging generative AI workloads in real deployment environments.

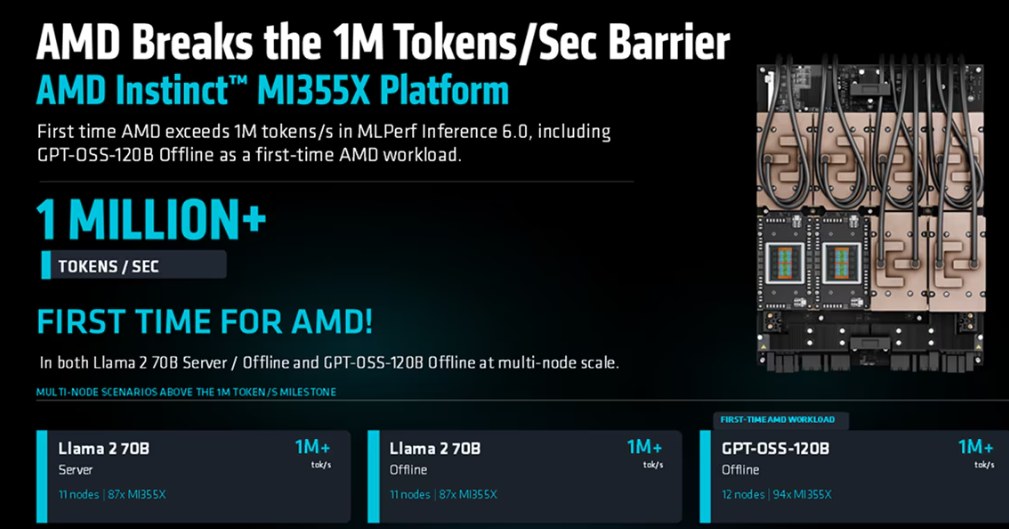

AMD has reported a major leap in AI inference performance with its latest MLPerf Inference v6.0 results, crossing the 1-million tokens-per-second threshold and signalling growing maturity in production-scale generative AI infrastructure.

The milestone was achieved using AMD Instinct MI355X GPUs running large language models such as Llama 2 70B and GPT-OSS-120B across multinode clusters. The results highlight a shift from single-node benchmarking to rack-scale, real-world deployment metrics in which throughput and latency define usability.

At the system level, AMD reported over 1 million tokens per second in both server and offline inference scenarios, positioning its hardware for high-demand AI services supporting large user bases. The company also demonstrated a 3.1× generational performance uplift over its previous AMD Instinct MI325X, underscoring rapid iteration in its CDNA-based accelerator roadmap.

The benchmark round itself reflects broader industry changes. MLCommons introduced new workloads in v6.0, including the GPT-OSS-120B model and text-to-video inference tests, aligning benchmarks with emerging generative AI use cases.

AMD’s submission also emphasised software-hardware co-design through its ROCm stack, enabling first-time deployment of new models while maintaining competitive performance. The company scaled inference across up to 12 nodes and supported diverse workloads, ranging from large language models to multimodal and video-generation tasks.

In a competitive context, MLPerf results show AMD narrowing the gap with rival GPU platforms, with cluster-scale configurations delivering performance close to leading systems in certain workloads.

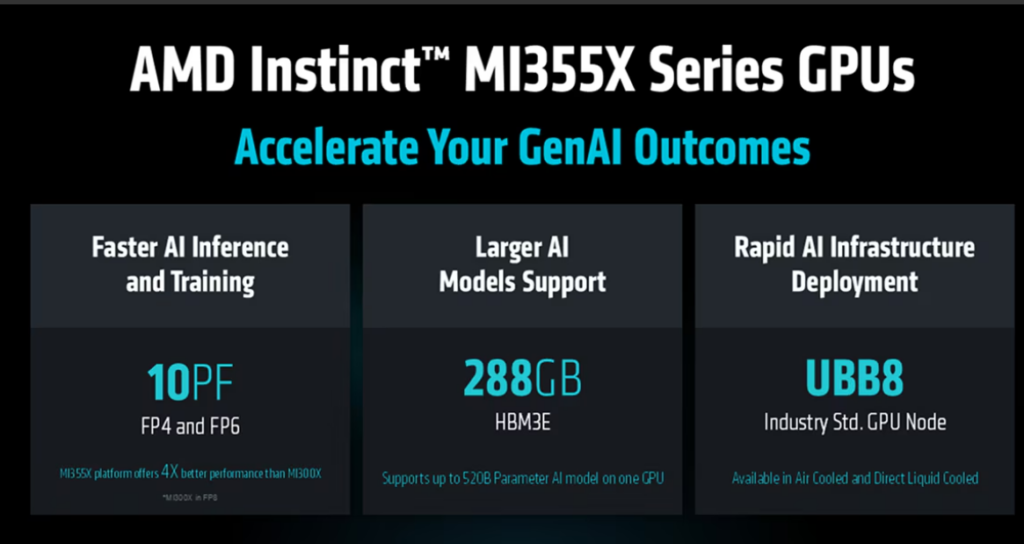

From an electronics perspective, the results underscore how AI accelerators are evolving beyond raw compute to tightly integrated systems that combine high-bandwidth memory, low-precision compute formats such as FP4, and distributed interconnects. These elements are increasingly critical for scaling inference efficiently across data centres.

The broader implication is a transition toward production-ready generative AI infrastructure, where performance is measured not just by peak compute, but by sustained, scalable throughput. With future rack-scale systems and next-generation accelerators already in development, MLPerf Inference 6.0 signals intensifying competition in AI silicon—and a shift toward deployment-focused benchmarking.