A rack-scale CPU platform emerges to support always-running AI systems, improving coordination and efficiency across large distributed computing environments

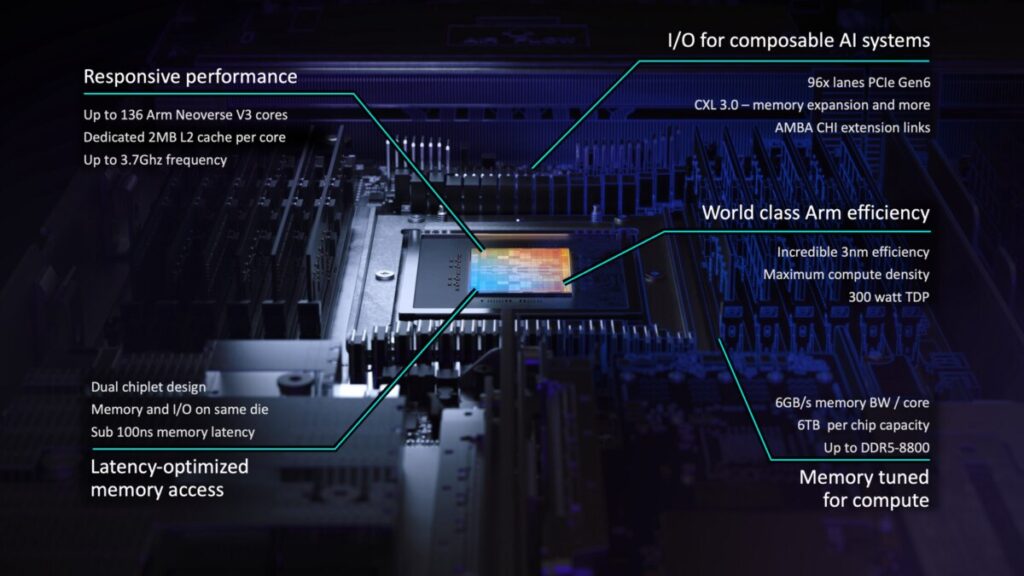

Arm Holdings has introduced the Arm AGI CPU, a new class of production-ready silicon built on its Neoverse platform, marking a major shift as the company moves beyond IP licensing to deliver its own data center processors. Designed specifically for the emerging era of agentic AI, the CPU targets large-scale cloud infrastructure where software agents operate continuously and coordinate complex workloads in real time.

As AI systems evolve from user-driven interactions to autonomous, always-on operations, the CPU is becoming central to orchestrating distributed compute. In modern AI data centers, CPUs manage accelerators, schedule workloads, handle memory and storage, and move data between components. The Arm AGI CPU is engineered to meet these demands, supporting high-performance, massively parallel workloads across dense rack-scale deployments.

Arm positions the processor as a performance and efficiency gain for next-generation AI infrastructure. The company claims more than 2x performance per rack compared with current x86-based systems, attributing this to a higher number of usable threads, improved per-thread efficiency, and optimized resource allocation. According to Arm, this sustains performance under heavy workloads while staying within the power and cooling limits of modern data centers.

From a system design perspective, the CPU enables higher compute density and scalability, supported by high-performance Arm Neoverse V3 cores and architecture-level optimizations across memory bandwidth, I/O, and operating frequency for sustained parallel execution. A reference configuration features a dual-node 1OU server design with 272 cores per blade, scaling up to 8,160 cores in a standard air-cooled rack. In collaboration with Supermicro, Arm also supports a liquid-cooled configuration delivering over 45,000 cores per rack. The platform is further backed by an Open Compute Project-compliant reference server design to accelerate ecosystem adoption.
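The air-cooled figures are internally consistent: 8,160 cores at 272 cores per blade works out to 30 blades per rack. The short Python sketch below reproduces that arithmetic. The blade count is derived from the quoted numbers rather than stated by Arm, and the constant and function names are illustrative only. The liquid-cooled figure is deliberately left out, since the per-blade core count for that configuration is not given.

```python
# Rack-density arithmetic using the figures quoted for Arm's air-cooled
# reference configuration. The blade count is derived here, not stated by Arm.
CORES_PER_BLADE = 272            # dual-node 1OU blade, as quoted
CORES_PER_AIR_COOLED_RACK = 8_160


def blades_per_rack(cores_per_rack: int, cores_per_blade: int) -> int:
    """Return the number of blades a rack-level core count implies.

    Raises ValueError if the rack total is not a whole number of blades.
    """
    blades, remainder = divmod(cores_per_rack, cores_per_blade)
    if remainder:
        raise ValueError("rack core count is not a whole number of blades")
    return blades


blades = blades_per_rack(CORES_PER_AIR_COOLED_RACK, CORES_PER_BLADE)
print(f"{blades} blades per air-cooled rack")  # prints "30 blades per air-cooled rack"
```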

Santosh Janardhan, Head of Infrastructure at Meta, says, “We worked alongside Arm to develop the Arm AGI CPU to deploy an efficient compute platform that significantly improves our data center performance density and supports a multi-generation roadmap for our evolving AI systems.”

With the Arm AGI CPU, Arm is extending its role in data center infrastructure, providing a scalable and efficient compute foundation for AI-native workloads.
