4. Implementing Image Creation in C Using the Sensor Matrix Data

A C program now uses the data retrieved in the previous step to create an image. The image is a pictorial representation of where, in the 2D plane of the touch panel, the touch was detected.

Rounak elaborates, “This image is made by drawing scan-lines, i.e. lines between a receiver and a transmitter, wherever the communication between them is successful. These scan-lines are drawn in the image for each possible Rx-Tx pair in the sensor matrix. Figure 1 below shows a sample image from our implementation. It was made using dummy data in which every possible Rx-Tx pair communicates successfully, because there is no touch or obstruction.”
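The source does not include the team's C code. The following is therefore only a minimal sketch of how such a scan-line image could be rendered. It rests on three assumptions:

- The transmitters sit evenly spaced along the top edge of the image and the receivers along the bottom edge (hypothetical geometry).
- The sensor data arrives as a matrix `ok[t][r]` that is non-zero when receiver `r` saw transmitter `t`.
- The names `N_TX`, `N_RX`, `IMG_W`, `IMG_H` and `render_scanlines`, and their values, are illustrative only.

Each scan-line is drawn with Bresenham's line algorithm.

```c
#include <stdlib.h>
#include <string.h>

/* Hypothetical panel geometry: all values are assumptions for illustration. */
#define N_TX  8      /* IR LEDs (transmitters) along the top edge */
#define N_RX  8      /* IR receivers along the bottom edge        */
#define IMG_W 64     /* output image width in pixels              */
#define IMG_H 64     /* output image height in pixels             */

/* Light every pixel on the segment (x0,y0)-(x1,y1) using Bresenham's algorithm. */
static void draw_line(unsigned char img[IMG_H][IMG_W],
                      int x0, int y0, int x1, int y1)
{
    int dx = abs(x1 - x0), sx = x0 < x1 ? 1 : -1;
    int dy = -abs(y1 - y0), sy = y0 < y1 ? 1 : -1;
    int err = dx + dy;

    for (;;) {
        img[y0][x0] = 255;              /* mark the pixel as part of a scan-line */
        if (x0 == x1 && y0 == y1)
            break;
        int e2 = 2 * err;
        if (e2 >= dy) { err += dy; x0 += sx; }
        if (e2 <= dx) { err += dx; y0 += sy; }
    }
}

/* x coordinate of the i-th of n sensors spread evenly across the image width. */
static int sensor_x(int i, int n)
{
    return n > 1 ? i * (IMG_W - 1) / (n - 1) : (IMG_W - 1) / 2;
}

/* ok[t][r] != 0 means receiver r saw the IR beam of transmitter t. */
void render_scanlines(unsigned char ok[N_TX][N_RX],
                      unsigned char img[IMG_H][IMG_W])
{
    memset(img, 0, IMG_H * IMG_W);      /* start from a black image */
    for (int t = 0; t < N_TX; t++)
        for (int r = 0; r < N_RX; r++)
            if (ok[t][r])               /* draw only successful Rx-Tx pairs */
                draw_line(img, sensor_x(t, N_TX), 0,
                          sensor_x(r, N_RX), IMG_H - 1);
}
```

With every entry of `ok` set, this produces a no-touch image of the kind described for Figure 1. Clearing the entries for blocked pairs removes their scan-lines. The buffer can then be saved in any simple grayscale format, such as PGM, to obtain a frame.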

5. Creating an AVI (Audio Video Interleave) Video File from the Frames (Images of Scan-Lines)

The next step makes an AVI video from the frames produced in the previous step. When a touch is sensed on the panel, one image is generated for each LED, as in Figure 2. The required frame is the one built from the sensor data of all actuated LEDs. This final frame is obtained by overlapping the frames generated for each lit LED, and it contains the touch point.
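The overlapping step could work as follows, assuming each per-LED frame is a grayscale buffer of the same size in which scan-lines are bright. The sketch takes the pixel-wise maximum across frames, so a pixel is lit in the combined frame if any lit LED produced a scan-line through it. The function name `overlap_frames` and this combining rule are assumptions for illustration. Packing the combined frames into an AVI container is a separate step and is not shown.

```c
#include <stddef.h>

/*
 * Overlap the per-LED frames into one combined frame.
 * frames[i] is the scan-line image captured while LED i was lit; every frame
 * has n_pixels bytes. Each pixel of `out` takes the maximum value found at that
 * position in any frame, which for black/white scan-line images is a logical OR.
 */
void overlap_frames(const unsigned char *const frames[], size_t n_frames,
                    size_t n_pixels, unsigned char *out)
{
    for (size_t p = 0; p < n_pixels; p++) {
        unsigned char v = 0;                 /* start from black */
        for (size_t i = 0; i < n_frames; i++)
            if (frames[i][p] > v)
                v = frames[i][p];            /* keep the brightest value */
        out[p] = v;
    }
}
```

Taking the maximum rather than summing keeps the result within the 0 to 255 range without any overflow handling.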

6. Creating a Video Interface with Community Core Vision (CCV)

Mordhwaj explains, “Up to this stage, we have a video that shows where the touch was detected (i.e. the blob) with respect to the sensor matrix frame. This information is very useful, because these videos are now ready to be fed to the image processing software CCV (Community Core Vision). CCV takes the video input stream, made of the blob detection frames from the touch panel, and outputs tracking data that can be used for mouse movements. With the video interfaced with the software, we now require a software driver that can synchronize the blob position found by CCV with the mouse movements. That leads us to the last step.”
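CCV's own pipeline (filtering, thresholding and contour tracking) is more elaborate than anything shown here. As a rough illustration of what "outputting tracking data" means, the hypothetical `find_blob` function below does three things:

- It estimates a single blob's position as the centroid of all pixels above a threshold.
- It normalizes that position to the [0, 1] range used by TUIO.
- It reports whether any blob was found at all.

It assumes one touch per frame, and a frame already preprocessed so that the touch appears as a bright region.

```c
#include <stddef.h>

/* Normalized blob position, with x and y in the range [0, 1]. */
typedef struct {
    float x, y;
    int found;      /* 0 if no pixel exceeded the threshold */
} blob_pos;

/*
 * Estimate the position of a single bright blob as the centroid of all
 * pixels whose value exceeds `threshold`. This is a simplified stand-in
 * for what a tracker such as CCV does, not CCV's own algorithm.
 */
blob_pos find_blob(const unsigned char *img, int w, int h,
                   unsigned char threshold)
{
    blob_pos b = {0.0f, 0.0f, 0};
    double sx = 0.0, sy = 0.0;
    long count = 0;

    for (int y = 0; y < h; y++)
        for (int x = 0; x < w; x++)
            if (img[(size_t)y * w + x] > threshold) {
                sx += x;            /* accumulate coordinates of bright pixels */
                sy += y;
                count++;
            }

    if (count > 0 && w > 1 && h > 1) {
        b.x = (float)(sx / count / (w - 1));   /* normalize to [0, 1] */
        b.y = (float)(sy / count / (h - 1));
        b.found = 1;
    }
    return b;
}
```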

7. TUIO Mouse Driver Implementation

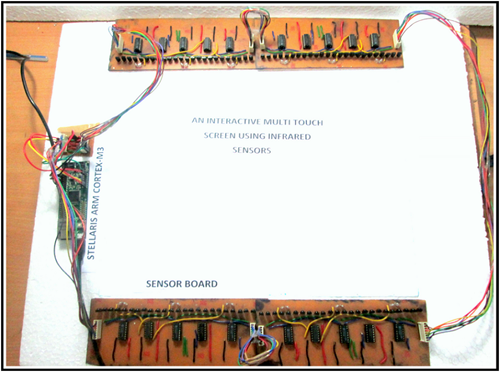

TUIO is an open framework and platform that supports tangible user interfaces. TUIO allows the transmission of meaningful information extracted from tangible interfaces, including touch events and object specifications.
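TUIO messages are carried over Open Sound Control (OSC). In the TUIO 1.1 2D cursor profile, a tracker reports each touch with a message of the form `/tuio/2Dcur set s x y X Y m`. The fields are the session ID, the normalized position, the velocity and the motion acceleration.

The sketch below encodes one such message by hand. It follows two OSC rules:

- Strings are NUL-terminated and padded to a multiple of four bytes.
- Numbers are 32-bit big-endian values.

It assumes `float` is a 32-bit IEEE 754 type, and the function names are hypothetical. A real tracker would also send `alive` and `fseq` messages in an OSC bundle, typically over UDP.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Append an OSC string: bytes, NUL terminator, zero-padded to 4 bytes. */
static size_t osc_put_string(unsigned char *buf, size_t off, const char *s)
{
    size_t len = strlen(s) + 1;               /* include the terminator */
    size_t padded = (len + 3) & ~(size_t)3;   /* round up to a multiple of 4 */
    memset(buf + off, 0, padded);
    memcpy(buf + off, s, len);
    return off + padded;
}

/* Append a 32-bit value in big-endian (network) byte order. */
static size_t osc_put_u32(unsigned char *buf, size_t off, uint32_t v)
{
    buf[off]     = (unsigned char)(v >> 24);
    buf[off + 1] = (unsigned char)(v >> 16);
    buf[off + 2] = (unsigned char)(v >> 8);
    buf[off + 3] = (unsigned char)v;
    return off + 4;
}

/* Append a float32; assumes float is a 32-bit IEEE 754 type. */
static size_t osc_put_float(unsigned char *buf, size_t off, float f)
{
    uint32_t bits;
    memcpy(&bits, &f, sizeof bits);           /* reinterpret the float's bits */
    return osc_put_u32(buf, off, bits);
}

/*
 * Encode "/tuio/2Dcur set s x y X Y m" as one OSC message.
 * buf must hold at least 52 bytes. Returns the message length in bytes.
 */
size_t tuio_encode_2dcur_set(unsigned char *buf, int32_t session_id,
                             float x, float y, float vx, float vy,
                             float accel)
{
    size_t off = 0;
    off = osc_put_string(buf, off, "/tuio/2Dcur");  /* address pattern */
    off = osc_put_string(buf, off, ",sifffff");     /* type tags       */
    off = osc_put_string(buf, off, "set");
    off = osc_put_u32(buf, off, (uint32_t)session_id);
    off = osc_put_float(buf, off, x);
    off = osc_put_float(buf, off, y);
    off = osc_put_float(buf, off, vx);
    off = osc_put_float(buf, off, vy);
    off = osc_put_float(buf, off, accel);
    return off;
}
```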

Vasuki chimes in, “This protocol encodes the control data from a tracker application (e.g. one based on computer vision) and sends it to any client that decodes this information. This combination of TUIO trackers, protocol and client implementations allows the rapid development of tangible multi-touch interfaces. Finally, we are able to run our touch user interface successfully, with mouse movements well synchronized with finger movements and gestures.”
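On the client side, the decoded cursor position has to be turned into a pointer position. TUIO positions are normalized to the range [0, 1]. A minimal sketch of that mapping follows; the names are hypothetical, and clamping is added as a design choice so the pointer never leaves the screen.

```c
/* Pointer position in screen pixels. */
typedef struct { int x, y; } screen_point;

/*
 * Map a TUIO cursor position (x, y normalized to [0, 1]) onto a screen of
 * screen_w x screen_h pixels. Out-of-range input is clamped to the screen.
 */
screen_point tuio_to_screen(float x, float y, int screen_w, int screen_h)
{
    if (x < 0.0f) x = 0.0f;
    if (x > 1.0f) x = 1.0f;
    if (y < 0.0f) y = 0.0f;
    if (y > 1.0f) y = 1.0f;

    screen_point p;
    p.x = (int)(x * (screen_w - 1) + 0.5f);   /* round to the nearest pixel */
    p.y = (int)(y * (screen_h - 1) + 0.5f);
    return p;
}
```

The resulting coordinates would then be passed to an OS-specific call that moves the pointer, for example `SetCursorPos` on Windows or `XWarpPointer` under X11. That part, and any smoothing of jittery positions, is left out.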

Challenges faced

I cannot help but quiz them on the challenges of bringing such a complex idea to fruition. Rounak says, “Designing the hardware was really hard. Together with the complicated signal and supply management, it was the most critical issue we had to handle to control the sensitivity of the touch-sensitive panel. We also ran into propagation delay, since the signal from the sensors has to pass through both the hardware and the software algorithm. But we managed to shrink the code to speed up the operation of the whole interface, and that helped a lot in completing the project.”

What lies ahead

With such a work ethic and such evident innovativeness, the talk moves on to the features they would like to add to their design. Patel adds, “We are still working on increasing its resolution and compactness. We are also planning to add more multi-touch features to this panel, such as scrolling and zooming. We have also been brainstorming ways to make the design more compact so that it fits easily on a computer screen. To decrease the thickness of the touch panel, we are still searching for smaller IR sensors. This will also let us reduce the size of the PCB for the sensor array.”