The logic behind the architecture

The Maxwell architecture improves substantially on its predecessors in control logic partitioning, workload balancing, clock-gating granularity, instruction scheduling, the number of instructions issued per clock cycle and more. Lower instruction latency has improved utilisation and throughput. Specialised integer instructions also accelerate pointer arithmetic.
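A back-of-the-envelope model shows why lower latency helps utilisation. A warp is a group of 32 threads that the scheduler issues together. Assume, hypothetically, that each warp is a single chain of dependent arithmetic instructions. Under that assumption a warp can issue only once every `latency` cycles, so a scheduler stays fully busy only if it has at least `latency` warps to rotate among. The latency values below are illustrative, not measured Maxwell or Kepler figures, and the function name is our own.

```python
def issue_utilisation(resident_warps, latency_cycles):
    """Fraction of cycles a scheduler can issue an instruction.

    Simplified model (an assumption, not NVIDIA's actual scheduler): every warp
    is a chain of dependent instructions, so each warp issues at most once per
    `latency_cycles`. With W warps, at most W issues fit in one latency window.
    """
    return min(1.0, resident_warps / latency_cycles)

warps = 6  # hypothetical number of warps available to one scheduler
print(round(issue_utilisation(warps, 9), 2))  # longer, hypothetical latency → 0.67
print(issue_utilisation(warps, 6))            # shorter, hypothetical latency → 1.0
```

The same six warps keep the scheduler fully busy only once the latency drops. Put the other way, a shorter latency needs fewer resident warps to reach full throughput.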

How Maxwell remembers

The Maxwell architecture sports a larger, dedicated shared memory, separate from the L1 cache. Shared memory is fast on-chip memory that the threads of a thread block use to exchange data. It should not be confused with unified memory, a CUDA 6 feature that provides a pool of managed memory shared between the CPU and GPU and bridges the CPU-GPU divide: a single pointer refers to each location, and both processors can access it. Each SMM (Maxwell streaming multiprocessor) has 96KB of shared memory, while the maximum shared memory per thread block remains 48KB. Further, native shared memory atomic operations for 32-bit integers, along with 32-bit and 64-bit compare-and-swap (CAS), make it more efficient to implement data structures shared by the threads of a block, such as lists and stacks.
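Here is a minimal sketch of the CAS retry loop that block-shared lists and stacks rely on. It is modelled in Python rather than CUDA so it runs anywhere, and it rests on several assumptions:

- Python threads stand in for the threads of one block.
- A lock inside the hypothetical `SharedWord` class stands in for the hardware atomicity that Maxwell provides natively for shared memory.
- `join()` plays the role of a block-wide barrier.

Each thread pushes its own node onto the front of a shared linked list, retrying until its compare-and-swap on the list head succeeds.

```python
import threading

EMPTY = -1  # sentinel index meaning "no node", like a null pointer

class SharedWord:
    """One word of 'shared memory' with an atomic compare-and-swap (hypothetical model)."""
    def __init__(self, value):
        self._value = value
        self._lock = threading.Lock()  # stands in for hardware atomicity

    def load(self):
        return self._value

    def cas(self, expected, new):
        """Store `new` only if the word still holds `expected`; return the old
        value, mirroring the convention of CUDA's atomicCAS."""
        with self._lock:
            old = self._value
            if old == expected:
                self._value = new
            return old

def build_block_list(num_threads):
    head = SharedWord(EMPTY)           # list head, shared by the whole block
    next_node = [EMPTY] * num_threads  # one node per thread, indexed by thread id

    def push(tid):
        while True:                      # classic CAS retry loop
            old_head = head.load()
            next_node[tid] = old_head    # link our node in front of the current head
            if head.cas(old_head, tid) == old_head:
                return                   # success: nobody changed the head meanwhile

    threads = [threading.Thread(target=push, args=(t,)) for t in range(num_threads)]
    for th in threads:
        th.start()
    for th in threads:
        th.join()                        # acts like a barrier such as __syncthreads()

    # After the barrier there are no concurrent writers, so walk the list.
    order, node = [], head.load()
    while node != EMPTY:
        order.append(node)
        node = next_node[node]
    return order

print(sorted(build_block_list(8)))  # → [0, 1, 2, 3, 4, 5, 6, 7]
```

The push order varies from run to run, but every node ends up in the list exactly once. Only pushes run concurrently here. Popping while other threads push would need extra care, for example against the ABA problem. One simple option is to separate push and pop phases with a barrier.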

Also, the maximum number of active thread blocks per multiprocessor rises from 16 to 32, improving the occupancy of kernels that run on small thread blocks. The L2 cache grows to 2048KB from the 1536KB of the Kepler series, reducing the demand on memory bandwidth. Narrowing the memory bus from 192-bit to 128-bit has, in turn, reduced power consumption.
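A simplified occupancy calculation shows the effect of the higher block limit on small thread blocks. It counts how many blocks fit on one multiprocessor under three limits: block slots, thread slots and shared memory. It ignores registers and warp-allocation granularity. The 16 and 32 block limits and the 96KB figure come from the text. The 2048-thread limit and Kepler's 48KB of shared memory are assumptions, and the function names are hypothetical.

```python
KB = 1024

# Per-multiprocessor limits. The block limits (16 vs 32) and Maxwell's 96KB of
# shared memory come from the text; the 2048-thread limit and Kepler's 48KB
# of shared memory are assumptions for illustration.
KEPLER = {"max_blocks": 16, "max_threads": 2048, "smem": 48 * KB}
MAXWELL = {"max_blocks": 32, "max_threads": 2048, "smem": 96 * KB}

def blocks_per_sm(arch, threads_per_block, smem_per_block=0):
    """Resident blocks = the tightest of the block, thread and shared-memory limits."""
    limits = [arch["max_blocks"], arch["max_threads"] // threads_per_block]
    if smem_per_block:
        limits.append(arch["smem"] // smem_per_block)
    return min(limits)

def occupancy(arch, threads_per_block, smem_per_block=0):
    """Fraction of the multiprocessor's thread slots that are filled."""
    blocks = blocks_per_sm(arch, threads_per_block, smem_per_block)
    return blocks * threads_per_block / arch["max_threads"]

# Small 64-thread blocks: Kepler runs out of block slots first.
print(occupancy(KEPLER, 64), occupancy(MAXWELL, 64))  # → 0.5 1.0
# The same blocks, each also using 4KB of shared memory.
print(occupancy(KEPLER, 64, 4 * KB), occupancy(MAXWELL, 64, 4 * KB))  # → 0.375 0.75
```

With 64-thread blocks, 16 block slots fill only half of 2048 thread slots, while 32 slots fill them all. Larger blocks, for example 256 threads, hit the thread limit first on both architectures, so the extra block slots matter most for small blocks.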

What Maxwell offers for graphics

Lighting gains a new dimension with NVIDIA VXGI (Voxel Global Illumination), which builds on the voxel cone tracing technique introduced by Cyril Crassin in 2011. In NVIDIA's words, “VXGI uses a 3D voxel data structure to capture coverage and lighting information at every point in the scene. This data structure can then be traced during the final rendering stage to accurately determine the effect of light bouncing around in the scene.”
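A toy ray march can illustrate the idea of tracing a voxel structure. This sketch makes heavy simplifying assumptions:

- Each voxel of a small grid stores a coverage (opacity) value and a lighting (radiance) value.
- A single ray samples the grid at fixed steps, compositing front to back until it is nearly opaque.

Real voxel cone tracing instead widens a cone and samples a prefiltered (mipmapped) voxel hierarchy. The grid layout and function names here are hypothetical.

```python
import math

def make_grid(n):
    """n×n×n unit voxels, each holding (coverage/opacity, radiance); all empty at first."""
    return [[[(0.0, 0.0) for _ in range(n)] for _ in range(n)] for _ in range(n)]

def trace(grid, origin, direction, step=0.5):
    """March one ray through the grid, compositing front to back.

    Returns (gathered_light, accumulated_opacity).
    """
    n = len(grid)
    norm = math.sqrt(sum(c * c for c in direction))
    d = [c / norm for c in direction]
    max_dist = n * math.sqrt(3)           # longest straight path through the grid
    light, alpha, t = 0.0, 0.0, 0.0
    while t < max_dist and alpha < 0.99:  # stop once the ray is nearly blocked
        p = [origin[i] + d[i] * t for i in range(3)]
        x, y, z = (math.floor(c) for c in p)
        if 0 <= x < n and 0 <= y < n and 0 <= z < n:
            a, radiance = grid[x][y][z]
            # Rescale opacity for the step length so that sampling one voxel
            # several times does not over-count its coverage.
            a_step = 1.0 - (1.0 - a) ** step
            light += (1.0 - alpha) * a_step * radiance
            alpha += (1.0 - alpha) * a_step
        t += step
    return light, alpha

# A fully opaque, lit wall at x = 5 in an 8×8×8 scene.
grid = make_grid(8)
for y in range(8):
    for z in range(8):
        grid[5][y][z] = (1.0, 2.0)

print(trace(grid, (0.5, 4.5, 4.5), (1, 0, 0)))   # toward the wall → (2.0, 1.0)
print(trace(grid, (0.5, 4.5, 4.5), (-1, 0, 0)))  # away from it → (0.0, 0.0)
```

A ray aimed at the lit wall picks up its radiance and becomes opaque, while one aimed away gathers nothing. Many such traces per pixel, against a voxelised scene, are what let light "bounce" without tracing individual triangles.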

A new approach

A new anti-aliasing approach, multi-frame sampled anti-aliasing (MFAA), interleaves anti-aliasing sample patterns both temporally and spatially. The result is a high-quality image at low cost and with good performance. Features such as dynamic super resolution, conservative rasterisation, viewport multicast and sparse textures add further zing to Maxwell's graphics.
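The interleaving can be sketched as a rule for choosing each pixel's sample pattern. This is a simplified model, not NVIDIA's implementation:

- Two hypothetical two-sample patterns alternate in a checkerboard across neighbouring pixels (the spatial part).
- The checkerboard swaps every frame (the temporal part).
- A plain average stands in for MFAA's actual filter that combines the current and previous frames.

```python
# Hypothetical 2-sample patterns (sub-pixel offsets within a pixel); the real
# MFAA sample positions are not specified in the text.
PATTERN_A = [(0.25, 0.25), (0.75, 0.75)]
PATTERN_B = [(0.75, 0.25), (0.25, 0.75)]

def sample_pattern(x, y, frame):
    """Checkerboard across pixels (spatial) that flips every frame (temporal)."""
    return PATTERN_A if (x + y + frame) % 2 == 0 else PATTERN_B

def resolve(previous, current):
    """Stand-in temporal filter: average this frame's pixel with the last one."""
    return 0.5 * (previous + current)

# One pixel over two consecutive frames: two samples rendered per frame,
# four distinct sample positions accumulated in total.
positions = set(sample_pattern(3, 5, 0)) | set(sample_pattern(3, 5, 1))
print(len(positions))                                      # → 4
# Neighbouring pixels use different patterns within the same frame.
print(sample_pattern(3, 5, 0) != sample_pattern(4, 5, 0))  # → True
print(resolve(0.0, 1.0))                                   # → 0.5
```

Each frame pays for only two samples per pixel. Because the patterns alternate, combining two frames covers four positions, which is the source of MFAA's quality-for-cost advantage.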

Parallelism taken to a new level

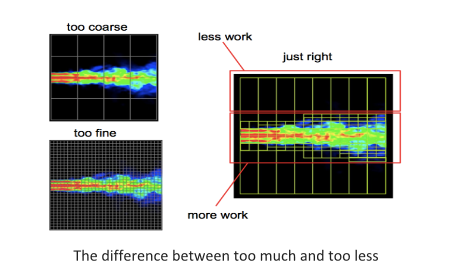

Another feature of interest is dynamic parallelism, which provides a way to express nested parallelism. Introduced in Compute Unified Device Architecture (CUDA) version 5.0, it lets threads running on the GPU launch new kernels, which in turn launch more threads. Previously, the number of threads had to be fixed up front and was typically uniform throughout an image. With dynamic parallelism, the number of threads can vary from one region of the image to another, so computation can be concentrated exactly where it is needed.
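In CUDA, dynamic parallelism lets a kernel launch a child kernel from device code with the usual `<<<grid, block>>>` syntax. This requires compute capability 3.5 or later, compiling with `-rdc=true` and linking against `cudadevrt`. The sketch below models only the resulting control flow, in Python on the CPU. A hypothetical `process` function stands in for a kernel that checks how much detail its region contains. Only where detail is high does it "launch" four child kernels on the region's quadrants. The detail measure, threshold and test image are assumptions for illustration.

```python
def detail(region, image):
    """Spread of pixel values in a square region: a crude measure of detail."""
    x0, y0, size = region
    values = [image(x, y) for x in range(x0, x0 + size) for y in range(y0, y0 + size)]
    return max(values) - min(values)

def process(region, image, threshold, min_size, work):
    """Models a parent kernel: detailed regions spawn four child 'kernels'."""
    x0, y0, size = region
    if size > min_size and detail(region, image) > threshold:
        half = size // 2
        for dx in (0, half):
            for dy in (0, half):
                # On the GPU, this recursive call would be a child kernel launch.
                process((x0 + dx, y0 + dy, half), image, threshold, min_size, work)
    else:
        work.append(region)  # flat (or smallest) region: handled uniformly
    return work

def edge(x, y):
    """Hypothetical 16×16 test image with one vertical edge between x = 4 and x = 5."""
    return 1.0 if x >= 5 else 0.0

regions = process((0, 0, 16), edge, threshold=0.5, min_size=2, work=[])
print(len(regions))                                  # → 22
print(sorted({r[0] for r in regions if r[2] == 2}))  # → [4, 6]
```

The refinement yields 22 work regions, compared with 64 if the whole image were processed uniformly at the finest 2×2 size. The smallest regions all hug the edge, so extra threads go only where the image actually changes.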


The latest release

The latest Maxwell release, the GeForce GTX 960 graphics card, lives up to its reputation. It is the first truly mainstream iteration of the Maxwell architecture and strikes the right chord in the 1080p enthusiast segment.

Is it really as good as it claims to be?

According to Brad Chacos of PCWorld, the architecture lives up to its billing as powerful yet stunningly power-efficient. The graphics card boasts 1024 CUDA cores, 8 streaming multiprocessors (SMMs), 32 ROP units and 64 texture units. It draws power from a single 6-pin connector and has a scant 120-watt thermal design power (TDP). Chacos adds that it runs cool and quiet even under heavy loads, opening the door to nifty noise-saving tricks and beastly overclocks. The card has just 2GB of memory on a 128-bit bus, a limitation that the cache and engine improvements are supposed to make up for.
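A little arithmetic relates the headline figures to one another. The sketch assumes the Maxwell SMM layout of 128 CUDA cores per SMM, with each core retiring one fused multiply-add (two floating-point operations) per clock. The 1000 MHz clock and the 7 Gbps memory data rate are round illustrative values, not the card's official specification, and the function names are hypothetical.

```python
def sm_count(total_cores, cores_per_sm=128):
    """Number of SMMs, assuming Maxwell's 128 CUDA cores per SMM."""
    return total_cores // cores_per_sm

def peak_fp32_gflops(total_cores, clock_mhz):
    """Peak single precision: each core retires one FMA (2 FLOPs) per clock."""
    return total_cores * 2 * clock_mhz / 1000

def memory_bandwidth_gbs(bus_width_bits, data_rate_gbps):
    """Bytes moved per transfer across the whole bus × transfers per second per pin."""
    return bus_width_bits / 8 * data_rate_gbps

print(sm_count(1024))                  # → 8
print(peak_fp32_gflops(1024, 1000))    # at an illustrative 1000 MHz → 2048.0
print(memory_bandwidth_gbs(128, 7.0))  # 128-bit bus at an assumed 7 Gbps → 112.0
print(memory_bandwidth_gbs(256, 7.0))  # a 256-bit bus at the same rate → 224.0
```

The last two lines show why a narrow bus leans on the larger cache. At the same data rate, halving the bus width halves raw bandwidth, so every avoided trip to DRAM counts for more.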

According to AnandTech, MFAA has a few flaws compared with multi-sample anti-aliasing (MSAA), but it is well received: considering its cost and features, MFAA on its own is deemed good enough. And with the 28nm process stagnant for the time being, the entire onus of improvement falls on design. NVIDIA has done well with Maxwell in terms of power and performance, and that is a huge step in the right direction. Its main competitor, AMD, has its work cut out.

What next

Wait for Pascal, Maxwell's successor! With 3D memory and NVLink, it is due in just a year.