A radically different processor design embeds entire AI models into silicon, delivering extreme speed and cost efficiency for next-generation inference workloads.

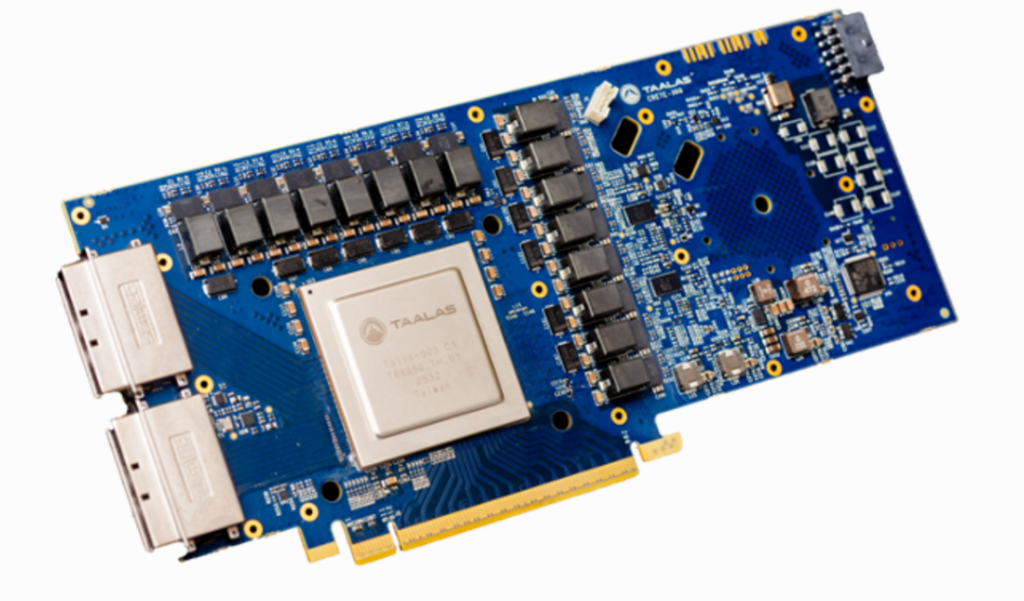

A new AI processor architecture from Taalas challenges conventional chip design by embedding entire AI models directly into silicon, dramatically boosting inference performance and efficiency. The approach eliminates the need for traditional software execution layers, enabling near-instant responses and significantly lower operating costs.

Unlike GPUs and general-purpose AI accelerators that prioritise flexibility, this architecture is built for single-model specialisation. Each chip is custom-designed for a specific AI model, with the model's weights hardwired into the silicon itself. This shift enables performance gains of one to two orders of magnitude over existing solutions.

The key features are:

- Hardwires a full AI model, including its weights, directly into silicon

- Delivers 10–100× higher inference performance than GPUs

- Sub-millisecond latency with throughput above 14,000 tokens/sec

- Up to 100× lower cost per token for inference workloads

- Rapid chip creation cycle (~2 months per model)

The processor can be developed in as little as two months after a model becomes available, allowing rapid deployment of optimised hardware. Early demonstrations show sub-millisecond latency and throughput exceeding 14,000 tokens per second on popular language models, making outputs appear almost instantaneous.
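To put the throughput figure in perspective, the arithmetic can be sketched in a few lines of Python. The 14,000 tokens-per-second rate comes from the demonstrations described above; the 700-token response length and the function names are illustrative assumptions, not figures from Taalas.

```python
# Throughput reported in the article's early demonstrations.
TOKENS_PER_SECOND = 14_000

# Hypothetical response length, chosen only for illustration.
EXAMPLE_RESPONSE_TOKENS = 700


def seconds_per_token(tokens_per_second: float) -> float:
    """Average time to emit one token at a given sustained throughput."""
    return 1.0 / tokens_per_second


def generation_time(num_tokens: int, tokens_per_second: float) -> float:
    """Time in seconds to emit num_tokens at a given sustained throughput."""
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    per_token_us = seconds_per_token(TOKENS_PER_SECOND) * 1e6
    total_ms = generation_time(EXAMPLE_RESPONSE_TOKENS, TOKENS_PER_SECOND) * 1e3
    print(f"Per token: {per_token_us:.2f} microseconds")
    print(f"{EXAMPLE_RESPONSE_TOKENS}-token response: {total_ms:.1f} ms")
```

At this rate each token takes roughly 71 microseconds on average, so even a response of several hundred tokens would finish in tens of milliseconds, which is why the output appears almost instantaneous.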

This performance leap also translates into major economic advantages. Inference costs drop to fractions of a cent per million tokens, far below those of GPU-based systems, potentially enabling cloud providers to handle far more queries at lower cost.
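The link between throughput and cost per token can be sketched with a simple model that divides an hourly operating cost by the number of tokens produced in that hour. The hourly cost below is a hypothetical placeholder, not a published figure, and the model ignores utilisation, batching, and chip development cost.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Cost in USD to generate one million tokens at a sustained throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical operating cost; only the throughput comes from the article.
    hypothetical_hourly_cost_usd = 1.00
    throughput = 14_000  # tokens per second, as reported in the article

    cost = cost_per_million_tokens(hypothetical_hourly_cost_usd, throughput)
    print(f"Cost per million tokens: ${cost:.4f}")
```

Because the cost scales inversely with throughput, a 10× to 100× throughput gain at a similar operating cost would reduce the cost per token by the same factor under this simplified model.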

However, the design comes with trade-offs. By focusing on a single model, the chip sacrifices programmability and cannot be repurposed for other workloads. While limited flexibility may restrict broader adoption, the architecture represents a significant step toward extreme specialisation in AI hardware.

The development signals a growing industry shift toward domain-specific silicon, where performance and efficiency gains outweigh the need for general-purpose computing. If widely adopted, this model-centric approach could reshape AI infrastructure, particularly for high-volume inference workloads.